AI Writes Code That Looks Right Until It Starts Missing Context

- Black Duck observes that AI-generated code fails due to missing system and business context.

- Human oversight in AI-driven development isn’t a yes-or-no question. It’s about placing judgment where it matters most.

- AI assistants have moved from experimentation to default tooling because they remove friction.

- Rather than writing every line of code, engineers are becoming reviewers, focusing on decisions.

- Schmitt says that the emerging best practice is “humans in the loop, not in the way.”

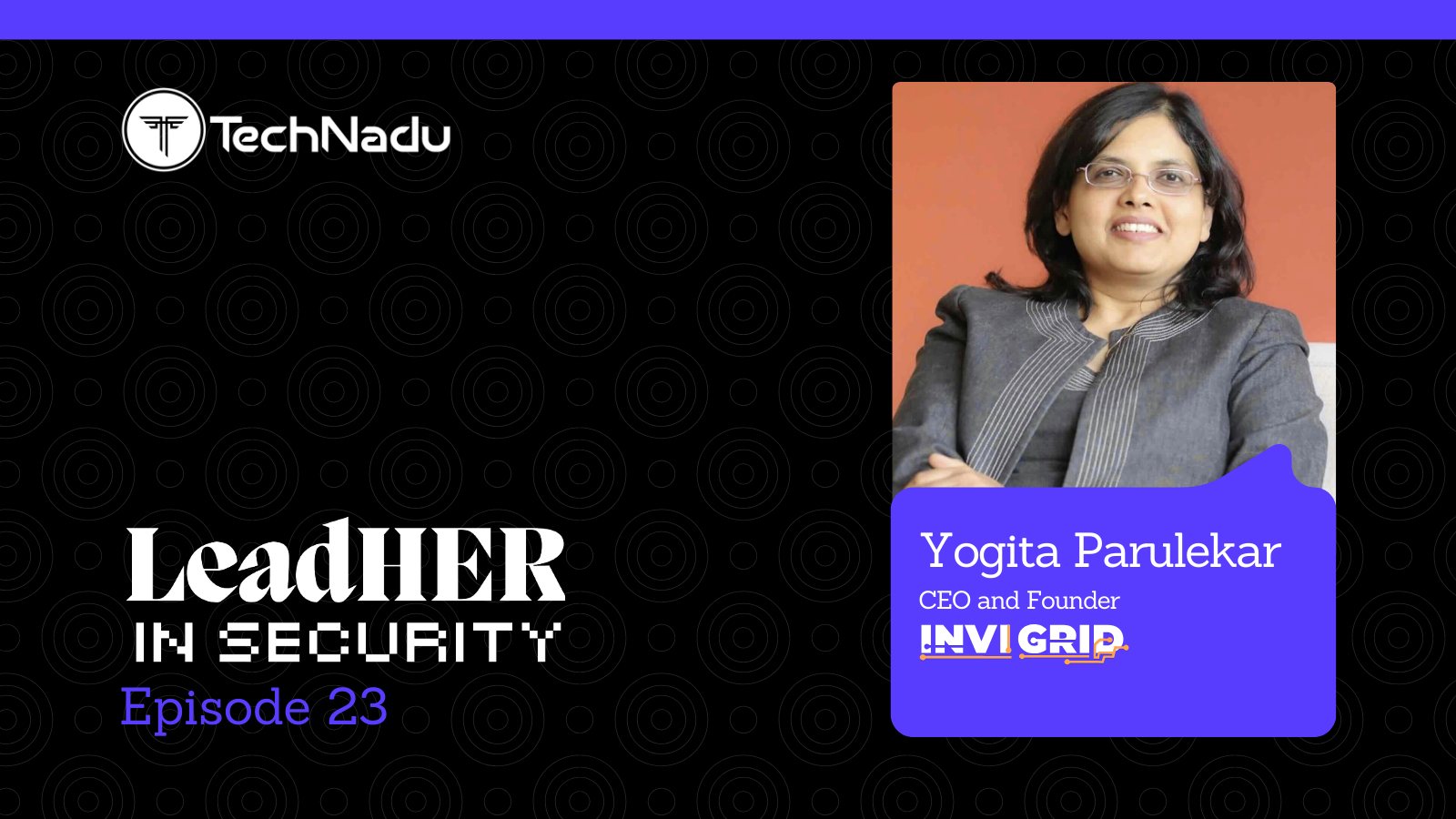

Jason Schmitt, CEO of Black Duck, explains how AI-generated code fails when it operates without complete awareness of the systems it runs in.

AI can generate functional code, pass tests, follow patterns, and appear secure. But it does not know your threat model. When AI-generated code fails on logic, it’s rarely due to obvious mistakes.

The tradeoffs built into your architecture, and the decisions your teams have already made about risk, are not fully known to AI. Dependency issues are easier to detect, so they get more attention, but they’re often a result of that missing context: AI can choose dependencies that ignore past supply chain lessons.

AI adoption is outpacing security today, but the gap can be closed with governance rather than by slowing down. The organizations that succeed will match their security to AI’s speed.

Vishwa: What’s the worst real-world failure you’ve seen from AI-generated code so far?

Jason: The worst failures I’ve seen from AI-generated code don’t come from obvious bugs—they come from missing context. Modern language models excel at reproducing common coding patterns, which is why they can generate clean, functional code and flag well-known vulnerabilities. And they will confidently tell you that it is perfectly functional and secure, even when it’s not! The problem is what they don’t know.

They lack awareness of your threat model, the historical triage decisions your teams have made, or why certain risks were accepted, deferred, or revisited. They don’t know the architectural tradeoffs baked into your systems, the business priorities shaping acceptable risk, or what’s actively on fire in production right now. They think they know your business logic from what they infer, but they can’t fully know it without real context.

When AI-generated code fails in the real world, it’s usually because it made a decision that looked reasonable in isolation but was wrong in your environment—an authentication check that quietly fails open, a dependency choice that ignores past supply chain lessons, or a “fix” that contradicts hard-won operational experience. Remember that LLMs are trained to please the end user, so incomplete inputs can result in wildly divergent assumptions.
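The “authentication check that quietly fails open” is easy to picture in code. The minimal Python sketch below uses a hypothetical `policy` callable and a hypothetical `PolicyUnavailable` error (neither comes from any particular product). Both functions look reasonable and behave identically on the happy path; they differ only in what happens when the authorization decision cannot be made.

```python
from typing import Callable

Policy = Callable[[str, str], bool]  # hypothetical: (user, action) -> allowed?


class PolicyUnavailable(Exception):
    """Raised when the hypothetical policy service cannot be reached."""


def check_permission_fail_open(user: str, action: str, policy: Policy) -> bool:
    try:
        return policy(user, action)
    except PolicyUnavailable:
        # Plausible-looking "resilience": keep the app working if the policy service is down.
        # Effect: every request is authorized whenever the check cannot run.
        return True


def check_permission_fail_closed(user: str, action: str, policy: Policy) -> bool:
    try:
        return policy(user, action)
    except PolicyUnavailable:
        # Deny when the decision cannot be made.
        return False


def unreachable_policy(user: str, action: str) -> bool:
    raise PolicyUnavailable("policy service timed out")


print(check_permission_fail_open("mallory", "delete_account", unreachable_policy))    # True
print(check_permission_fail_closed("mallory", "delete_account", unreachable_policy))  # False
```

A test suite that only exercises a reachable policy service passes for both versions, which is why this kind of flaw can slip through review.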

Attempts to close these gaps with fine-tuning or clever prompting tend to be brittle and expensive, because organizational reality changes faster than models can be retrained. This isn’t a model capability problem; it’s a context problem. Securing AI-driven development isn’t about making the model smarter; it’s about ensuring the right security context is present at the exact moment decisions are made.

Vishwa: Do you believe that companies are deploying insecure AI-written code into production?

Jason: Companies are absolutely deploying insecure AI-written code into production, and not because they’re reckless, but because AI is accelerating development faster than most security programs are designed to absorb.

AI assistants and agents have dramatically reduced the time and skill it takes to create and change software. That’s a productivity win, but it also means more code is being shipped with less scrutiny per change. The news is full of stories of non-technical users producing sophisticated apps for real-world use cases, yet they have no understanding of the security implications of their choices.

When release velocity increases while security review, testing, and triage still scale linearly, developers compensate in predictable ways: reviews get deferred, exceptions widen, teams rely on downstream testing, and risk gets rationalized as “we’ll clean it up later.” That’s if they even know to have these controls in place.

The net effect is that insecure code doesn’t need to sneak past controls—it gets pushed through by schedule pressure and limited security throughput.

The real risk isn’t about AI writing “bad code.” It’s that AI writes plausible code (code that reads well, passes basic tests, appears idiomatic, and satisfies the end user) without the organizational context that experienced engineers rely on:

- threat models,

- architectural constraints,

- historical risk decisions, and

- current operational reality.

When velocity outpaces that context, security gaps follow.

For security and business leaders, the answer isn’t banning or limiting access to AI—it’s governing it deliberately. Assume AI is already embedded in your SDLC and build controls accordingly. Shift security context closer to where decisions are made:

- inside IDEs,

- pull requests, and

- CI pipelines—not weeks later in a backlog.

Standardize “trust gates” for high-risk changes like authentication flows, cryptography, IAM, deserialization, and dependency additions. Focus on signal quality, not scan volume; teams drowning in noise will route around security every time.
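A trust gate can start as something as simple as classifying the file paths a change touches. The sketch below is illustrative only: the category names mirror the list above, but the glob patterns are hypothetical and would need tuning per codebase, and treating a touched dependency manifest as a “dependency addition” is a rough proxy for actually diffing the manifest.

```python
from fnmatch import fnmatchcase

# Hypothetical glob patterns per high-risk category, matched against lowercased paths.
HIGH_RISK_PATTERNS: dict[str, list[str]] = {
    "authentication": ["*auth*", "*login*", "*session*"],
    "cryptography": ["*crypto*", "*tls*", "*keys*"],
    "iam": ["*iam*", "*roles*", "*permission*"],
    "deserialization": ["*serializ*", "*pickle*"],
    # Touching a manifest is used as a rough proxy for "adds a dependency".
    "dependency_addition": ["*requirements*.txt", "*package.json", "*go.mod", "*pom.xml", "*cargo.toml"],
}


def trust_gates(changed_paths: list[str]) -> set[str]:
    """Return the high-risk categories a change touches."""
    hits: set[str] = set()
    for path in changed_paths:
        lowered = path.lower()
        for category, patterns in HIGH_RISK_PATTERNS.items():
            if any(fnmatchcase(lowered, pattern) for pattern in patterns):
                hits.add(category)
    return hits


def requires_enhanced_review(changed_paths: list[str]) -> bool:
    """Any trust-gate hit routes the change to enhanced (human) review."""
    return bool(trust_gates(changed_paths))


print(trust_gates(["src/auth/login.py", "README.md"]))  # {'authentication'}
print(requires_enhanced_review(["docs/intro.md"]))       # False
```

Returning named categories rather than a bare yes/no lets a gate route each change to the reviewers who own that kind of risk.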

Agentic tools deserve particular attention. When an AI system can make changes at scale, govern it like production access:

- least privilege by default,

- clear audit trails,

- scoped permissions, and

- well-defined rollback paths.
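Governing an agent like production access can be sketched as a thin wrapper that checks an explicit allow-list before every action, records every attempt, and keeps an undo step for each applied change. Everything here (`AgentGrant`, `GovernedAgent`, the action and repository names) is hypothetical and only illustrates the four controls above; it is not modeled on any specific agent framework.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

Undo = Callable[[], None]


@dataclass(frozen=True)
class AgentGrant:
    """Hypothetical grant: the only actions and repositories an agent may touch."""
    agent_id: str
    allowed_actions: frozenset[str]  # least privilege: explicit allow-list
    allowed_repos: frozenset[str]    # scoped permissions


@dataclass
class AuditEntry:
    timestamp: str
    agent_id: str
    action: str
    repo: str
    allowed: bool


@dataclass
class GovernedAgent:
    grant: AgentGrant
    audit_log: list[AuditEntry] = field(default_factory=list)  # clear audit trail
    undo_stack: list[Undo] = field(default_factory=list)       # rollback path

    def perform(self, action: str, repo: str, apply: Callable[[], Undo]) -> bool:
        """Run `apply` only if the grant permits it; record every attempt either way."""
        allowed = action in self.grant.allowed_actions and repo in self.grant.allowed_repos
        self.audit_log.append(AuditEntry(
            timestamp=datetime.now(timezone.utc).isoformat(),
            agent_id=self.grant.agent_id,
            action=action,
            repo=repo,
            allowed=allowed,
        ))
        if not allowed:
            return False  # default deny
        self.undo_stack.append(apply())  # `apply` makes the change and returns its inverse
        return True

    def rollback(self) -> int:
        """Undo every applied change, most recent first; return how many were undone."""
        undone = 0
        while self.undo_stack:
            self.undo_stack.pop()()
            undone += 1
        return undone


# Usage with a toy piece of state standing in for a repository.
state = {"version": "1.0"}


def bump_version() -> Undo:
    previous = state["version"]
    state["version"] = "1.1"

    def undo() -> None:
        state["version"] = previous

    return undo


agent = GovernedAgent(AgentGrant("fixer-bot", frozenset({"open_pr"}), frozenset({"payments-api"})))
ok1 = agent.perform("open_pr", "payments-api", bump_version)  # permitted
ok2 = agent.perform("merge", "payments-api", bump_version)     # denied: action not granted
rolled = agent.rollback()                                      # restores version "1.0"
```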

Just as importantly, governance has to be measurable. Track indicators reflecting both adoption and risk:

- AI-assisted code volume,

- time for changes to pass security gates,

- secrets detection frequency,

- new dependency introductions and blocks, and

- whether high-risk changes merge without enhanced review.

If you can't measure it, you can't govern it credibly.
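Several of these indicators can be computed directly from merge records. The sketch assumes a hypothetical `MergeRecord` shape; in practice the fields would come from whatever version control, CI, and scanner data an organization already collects, and how a change gets labeled “AI-assisted” is itself a design decision.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class MergeRecord:
    """One merged change. Field names are hypothetical, for illustration."""
    ai_assisted: bool
    lines_changed: int
    hours_to_pass_gates: float
    secrets_detected: int
    deps_added: int
    deps_blocked: int
    high_risk: bool
    enhanced_review: bool


def governance_metrics(records: list[MergeRecord]) -> dict[str, float]:
    """Summarize adoption and risk indicators over a set of merged changes."""
    total_lines = sum(r.lines_changed for r in records)
    ai_lines = sum(r.lines_changed for r in records if r.ai_assisted)
    return {
        # Share of changed lines that came from AI-assisted changes.
        "ai_assisted_share": ai_lines / total_lines if total_lines else 0.0,
        "median_hours_to_pass_gates": median(r.hours_to_pass_gates for r in records) if records else 0.0,
        "secrets_detections": sum(r.secrets_detected for r in records),
        "deps_added": sum(r.deps_added for r in records),
        "deps_blocked": sum(r.deps_blocked for r in records),
        # High-risk changes that merged without enhanced review: ideally zero.
        "high_risk_without_enhanced_review": sum(
            1 for r in records if r.high_risk and not r.enhanced_review
        ),
    }


records = [
    MergeRecord(ai_assisted=True, lines_changed=300, hours_to_pass_gates=2.0, secrets_detected=1,
                deps_added=2, deps_blocked=1, high_risk=True, enhanced_review=True),
    MergeRecord(ai_assisted=True, lines_changed=100, hours_to_pass_gates=6.0, secrets_detected=0,
                deps_added=0, deps_blocked=0, high_risk=True, enhanced_review=False),
    MergeRecord(ai_assisted=False, lines_changed=100, hours_to_pass_gates=4.0, secrets_detected=0,
                deps_added=1, deps_blocked=0, high_risk=False, enhanced_review=False),
]
metrics = governance_metrics(records)
print(metrics)
```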

The companies that will get this right aren’t the ones trying to slow innovation—they’re the ones putting the right guardrails, accountability, and security context at the exact moment code is created and changed. That is the real unlock for the productivity promises of AI.

Vishwa: Is fully autonomous coding dangerous at this stage?

Jason: Absolutely—primarily because decision-making accountability and sufficient context aren't there yet.

Autonomous coding systems can generate large volumes of clean, plausible code and even fix issues at speed. What they can’t reliably do is understand why a decision should or shouldn’t be made in a specific organization:

- your threat model,

- past risk acceptances,

- architectural constraints,

- regulatory exposure, or

- what’s currently fragile in production.

When an autonomous system optimizes for correctness or velocity without that context, it can make locally “reasonable” decisions with systemwide consequences.

An autonomous agent that can change dependencies, refactor auth logic, or automerge fixes across many services can propagate a flawed assumption at machine speed. Humans make mistakes too, but they do so more slowly, with implicit judgment shaped by experience and accountability. Fully autonomous systems lack that braking mechanism.

That doesn’t mean autonomy has no place. Narrow, well-scoped autonomy (e.g., test generation or suggesting fixes) is already delivering real value. The danger comes when autonomy extends into security-critical or business-critical decisions without guardrails, especially when there’s no human-in-the-loop and no clear rollback path.
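One way to give an agent that applies the same fix across many services a braking mechanism is a staged rollout: apply to a small batch, verify, and stop at the first failure instead of continuing at machine speed. The `apply_fix`, `verify`, and `rollback` callables and the batch size are hypothetical placeholders in this sketch.

```python
from typing import Callable


def staged_rollout(
    services: list[str],
    apply_fix: Callable[[str], None],
    verify: Callable[[str], bool],
    rollback: Callable[[str], None],
    batch_size: int = 2,
) -> tuple[list[str], list[str]]:
    """Apply a fix batch by batch, halting at the first batch that fails verification.

    Returns (services that kept the fix, services in the failed batch that were rolled back).
    Services after the failed batch are never touched.
    """
    kept: list[str] = []
    for start in range(0, len(services), batch_size):
        batch = services[start:start + batch_size]
        for service in batch:
            apply_fix(service)
        if not all(verify(service) for service in batch):
            for service in batch:
                rollback(service)
            return kept, batch  # stop propagating; escalate to a human from here
        kept.extend(batch)
    return kept, []


# Usage with in-memory stand-ins for deploy, health check, and revert.
applied: list[str] = []
kept, rolled_back = staged_rollout(
    ["auth", "search", "billing", "orders", "inventory"],
    apply_fix=applied.append,
    verify=lambda service: service != "billing",  # pretend the fix breaks billing
    rollback=applied.remove,
)
print(kept, rolled_back, applied)
```

This version rolls back only the failing batch and stops, leaving earlier verified batches in place for a human to review; a stricter policy might roll back everything the agent touched.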

The near-term model that works is constrained autonomy with strong governance:

- least privilege access,

- clear trust boundaries,

- mandatory review for high-risk changes,

- auditable actions, and

- measurable outcomes.

As long as autonomous systems operate within those constraints, they can safely accelerate development.

When they’re allowed to operate independently of context, history, and accountability—that’s when they become risky.

Vishwa: Where does AI fail most—logic, dependencies, or security context?

Jason: Security context—and logic and dependency issues usually stem from that gap.

Modern models are very good at producing syntactically correct, idiomatic code. Where they struggle is understanding organizational context—your threat model, architectural constraints, historical risk decisions, regulatory exposure, and what’s currently fragile in production.

That’s why the most serious failures tend to involve authentication or authorization logic that technically exists but fails open, cryptographic use that is correct in isolation but wrong for the trust boundary, or “reasonable” fixes that contradict prior, intentional security decisions.

These aren’t bugs caused by ignorance of programming—they’re caused by lack of situational awareness. The model optimizes for plausibility, not for appropriateness in your environment.
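As an example of cryptography that is “correct in isolation but wrong for the trust boundary”: a plain SHA-256 digest is a valid check against accidental corruption, but when the data and its tag both pass through a client (a cookie, say), the client can simply recompute the digest. A keyed HMAC closes that gap. The sketch uses only Python’s standard `hashlib`, `hmac`, and `secrets` modules; the payload and `SERVER_KEY` are hypothetical.

```python
import hashlib
import hmac
import secrets

SERVER_KEY = secrets.token_bytes(32)  # hypothetical server-side secret, never sent to clients


def sign_plain(payload: bytes) -> str:
    # Correct SHA-256 usage, but anyone can compute it: no integrity across a trust boundary.
    return hashlib.sha256(payload).hexdigest()


def verify_plain(payload: bytes, tag: str) -> bool:
    return hmac.compare_digest(sign_plain(payload), tag)


def sign_hmac(payload: bytes, key: bytes = SERVER_KEY) -> str:
    # Keyed MAC: only the holder of `key` can produce a valid tag.
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_hmac(payload: bytes, tag: str, key: bytes = SERVER_KEY) -> bool:
    return hmac.compare_digest(sign_hmac(payload, key), tag)


# A client edits its cookie and recomputes the tag itself.
forged = b'{"user": "alice", "role": "admin"}'
forged_tag = hashlib.sha256(forged).hexdigest()
print(verify_plain(forged, forged_tag))  # True: the forgery is accepted
print(verify_hmac(forged, forged_tag))   # False: the client does not have the key
```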

When AI-generated code fails on logic, it’s rarely due to obvious mistakes. Instead, it’s edge cases, missing invariants, or business rule assumptions that don’t hold under real conditions.
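Missing invariants are easiest to see with a concrete business rule. Assume a hypothetical rule that the total refunded on an order may never exceed the captured amount. A plausible implementation validates each refund on its own and passes single-refund tests; only a version that checks the cumulative total enforces the actual invariant.

```python
from dataclasses import dataclass, field
from decimal import Decimal


@dataclass
class Order:
    captured: Decimal
    refunds: list[Decimal] = field(default_factory=list)


def refund_plausible(order: Order, amount: Decimal) -> None:
    # Validates each refund in isolation; passes any single-refund test.
    if amount <= 0 or amount > order.captured:
        raise ValueError("invalid refund amount")
    order.refunds.append(amount)


def refund_with_invariant(order: Order, amount: Decimal) -> None:
    # Enforces the hypothetical business invariant: total refunds <= captured amount.
    if amount <= 0:
        raise ValueError("refund must be positive")
    if sum(order.refunds, Decimal("0")) + amount > order.captured:
        raise ValueError("refunds would exceed the captured amount")
    order.refunds.append(amount)


a = Order(captured=Decimal("100"))
refund_plausible(a, Decimal("80"))
refund_plausible(a, Decimal("80"))  # accepted: 160 refunded on a 100 order

b = Order(captured=Decimal("100"))
refund_with_invariant(b, Decimal("80"))
try:
    refund_with_invariant(b, Decimal("80"))
    second_refund_rejected = False
except ValueError:
    second_refund_rejected = True
print(sum(a.refunds), b.refunds, second_refund_rejected)
```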

Dependency issues get a lot of attention because they’re easy to detect:

- outdated libraries,

- risky licenses,

- unmaintained packages, or

- overly broad imports for simple needs.

And while dependency risk is real, it’s usually a symptom of missing context rather than a standalone failure.

Vishwa: Are AI coding tools being adopted faster than they can be secured?

Jason: Yes—and the mismatch is creating significant governance gaps.

AI assistants have moved from experimentation to default tooling remarkably fast because they remove friction from everyday development. That bottom-up adoption curve is steep, while security programs, governance models, and risk controls still assume slower, more predictable change.

The mismatch shows up in three ways:

- Velocity outpacing control design. Code is being generated, modified, and merged faster than security review, policy enforcement, and threat modeling can realistically keep up. Many existing controls were built for human-driven workflows, not machine-scale throughput.

- Adoption before visibility. AI tools are widely used before security leaders have a clear inventory of where they're deployed, what data they access, or what decisions they influence. You can't govern what you can't see.

- Context lag. Security processes that inject the necessary organizational awareness often run too late, after code has already moved downstream.

That doesn’t mean teams should slow adoption. It means they need to reframe security for AI-accelerated development. The companies making progress are doing a few things differently:

- assuming AI is already in the SDLC,

- moving security guardrails closer to where code is written and reviewed,

- tightly governing high-risk changes and agent permissions, and

- measuring signal quality instead of scan volume.

Adoption is ahead of security—but that gap is a solvable governance problem, not a reason to pull the brakes. The winners will be the organizations that adapt their security models to AI’s speed, rather than trying to force AI back into yesterday’s workflows.

Vishwa: In practical terms, how is Black Duck’s “agentic AI security” different from other AI-security solutions in the market?

Jason: Traditional AI security tools typically use a single AI model or rule-based scanning engines. Black Duck Signal, on the other hand, deploys multiple specialized AI security agents that work together as a coordinated team. Each agent has a specific role—analyzing code, assessing exploitability, prioritizing risks, and recommending fixes—reasoning through issues with human-like logic.

The critical differentiator is ContextAI™—Black Duck's proprietary security model built on 20+ years of curated application security intelligence.

Traditional AI tools rely primarily on LLM pattern matching and generic training data, which can produce hallucinations and false positives. Signal, however, augments LLM analysis with battle-tested, human-vetted security intelligence and multiple analysis techniques to deliver verified analysis and exploitability data that other AI solutions can only guess at.

Signal integrates directly into modern agentic software development workflows through Model Context Protocol (MCP), APIs connecting to AI coding assistants (like Claude Code), IDE integrations, and automated AI development pipelines. Security analysis happens in real time as AI generates code, not as an afterthought scan.

Additionally, Signal doesn't just find problems—it can automatically generate and apply fixes, extending your security team with role- and task-based agents that work autonomously.

While other AI security tools bolt AI capabilities onto traditional scanning approaches, Signal was purpose-built from the ground up for AI-generated code, combining the reasoning power of multiple specialized AI agents with decades of real-world security intelligence to deliver high-fidelity results without the noise and hallucinations common in general-purpose AI tools.

Vishwa: How much human oversight is realistically needed in AI-driven development?

Jason: The question of human oversight in AI-driven development isn't binary—it's about strategic placement of human judgment where it matters most.

We're seeing a fundamental shift in how developers work. Rather than writing every line of code, engineers are becoming architects and reviewers, focusing on high-value decisions while AI handles the repetitive, boilerplate work. This transition requires a "trust but verify" approach built on three pillars.

First, automated security and quality checks are non-negotiable. Solutions like Black Duck Signal provide continuous, intelligent oversight at a scale humans can't match: an always-on security expert reviewing every code change in real time.

Second, risk-proportional oversight. Authentication systems, payment processing, and compliance-critical functions demand rigorous human review. Scaffolding code, documentation updates, and basic CRUD operations? AI can handle those with minimal supervision. Successful organizations clearly define these boundaries.

Third, context still requires human judgment. AI doesn't inherently understand your business logic, legacy system constraints, or nuanced requirements behind user stories. Developers remain essential for architectural decisions, requirement interpretation, and ensuring code aligns with broader system design.

The practical reality is that you can't—and shouldn't—eliminate human oversight entirely. When code ships with your company's reputation behind it, accountability matters. However, we can dramatically reduce manual review time by letting AI generate first drafts while humans focus on strategic decisions.

The emerging best practice is "humans in the loop, not in the way." Set up intelligent guardrails and automated checks so AI can work fast but require human approval at decision points that truly impact security, compliance, and business outcomes.

Organizations that get this balance right will see development velocity increase by orders of magnitude while maintaining—or even improving—code quality and security posture. The winners will be those who recognize that AI amplifies human expertise rather than replacing it.

Vishwa: What would a worst-case scenario look like if this trend continues unchecked?

Jason: The worst-case scenario isn't hypothetical—Black Duck's own research reveals we're already in it, and the trajectory is alarming. For instance, the 2026 Open Source Security and Risk Analysis (OSSRA) report, which examined 947 commercial codebases, found that vulnerabilities have more than doubled year-over-year—surging 107% to an average of 581 vulnerabilities per codebase.
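“More than doubled” follows from the percentage: a 107% increase multiplies the previous value by 2.07. Assuming the increase is measured against the prior report’s per-codebase average, that implies a prior average of roughly 281 vulnerabilities per codebase:

$$
\text{prior average} = \frac{581}{1 + 1.07} = \frac{581}{2.07} \approx 280.7
$$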

This isn't gradual degradation; it's exponential risk acceleration. And the connection to AI is undeniable. Our research also found that 24.7% of AI-generated code contains confirmed security vulnerabilities. The pace at which software is created now exceeds the pace at which most organizations can secure it.

Here's where it compounds into crisis: only 24% of organizations perform comprehensive security, IP, license, and quality evaluations of AI-generated code.

Three-quarters of enterprises are essentially flying blind, accumulating security and legal debt they don't even know exists.

The supply chain dimension amplifies the problem. Our data reveals that 93% of codebases now contain "zombie" components—open source libraries with no development activity in over two years.

When new exploits emerge, there's no maintainer to issue patches. Meanwhile, the average project now contains 1,180 components, with 64% operating as transitive dependencies—inherited risks that developers never explicitly reviewed.
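Both findings can be checked against an organization’s own dependency graph with a few lines of code. The sketch below takes a hypothetical graph and last-activity dates (the component names are made up), computes the full transitive closure from the direct dependencies, and flags components with no activity in roughly two years, approximated as 730 days.

```python
from datetime import date, timedelta


def transitive_closure(direct: set[str], graph: dict[str, set[str]]) -> set[str]:
    """Every component reachable from the direct dependencies (direct ones included)."""
    seen: set[str] = set()
    stack = list(direct)
    while stack:
        component = stack.pop()
        if component in seen:
            continue
        seen.add(component)
        stack.extend(graph.get(component, set()))
    return seen


def zombie_components(last_activity: dict[str, date], today: date, years: int = 2) -> set[str]:
    """Components whose last recorded activity is older than roughly `years` years."""
    cutoff = today - timedelta(days=365 * years)  # approximation that ignores leap days
    return {name for name, last in last_activity.items() if last < cutoff}


# Hypothetical project: two direct dependencies pulling in three transitive ones.
direct = {"web-framework", "http-client"}
graph = {
    "web-framework": {"template-engine", "http-client"},
    "http-client": {"url-parser"},
    "template-engine": {"markup-escape"},
}
all_components = transitive_closure(direct, graph)
transitive_only = all_components - direct
print(f"{len(transitive_only)} of {len(all_components)} components are transitive")

last_activity = {
    "web-framework": date(2025, 11, 2),
    "http-client": date(2025, 6, 1),
    "template-engine": date(2021, 3, 15),
    "url-parser": date(2024, 9, 30),
    "markup-escape": date(2019, 1, 8),
}
zombies = zombie_components(last_activity, today=date(2026, 1, 15))
print(sorted(zombies))  # ['markup-escape', 'template-engine']
```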

License exposure has also reached record levels: 68% of codebases contain license conflicts, a 12-percentage-point jump in a single year and the largest increase OSSRA has ever recorded.

AI models trained on public repositories can reproduce GPL or AGPL-licensed code without any indication of origin, creating invisible compliance gaps that surface catastrophically during M&A due diligence or regulatory audits.
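Catching reproduced code generally relies on snippet matching against a corpus of known open source code. The sketch below shows only the basic idea, using hashed, whitespace-normalized windows of lines; the index contents, window size, component name, and copyleft set are hypothetical, production scanners use far more robust fingerprinting, and this is not a description of how Black Duck’s tooling works.

```python
import hashlib

COPYLEFT = {"GPL-2.0-only", "GPL-3.0-only", "AGPL-3.0-only"}  # SPDX identifiers


def _normalize(line: str) -> str:
    # Collapse whitespace (including indentation) so trivial reformatting doesn't hide a match.
    return " ".join(line.split())


def fingerprints(source: str, window: int = 3) -> set[str]:
    """Hash every run of `window` consecutive non-blank, normalized lines."""
    lines = [_normalize(line) for line in source.splitlines() if line.strip()]
    return {
        hashlib.sha256("\n".join(lines[i:i + window]).encode()).hexdigest()
        for i in range(len(lines) - window + 1)
    }


def build_index(known: dict[str, tuple[str, str]], window: int = 3) -> dict[str, tuple[str, str]]:
    """Map fingerprints of known snippets to (component, SPDX license id)."""
    index: dict[str, tuple[str, str]] = {}
    for text, origin in known.items():
        for fp in fingerprints(text, window):
            index[fp] = origin
    return index


def flag_copyleft_matches(generated: str, index: dict[str, tuple[str, str]], window: int = 3) -> set[tuple[str, str]]:
    """Origins of copyleft snippets that appear in `generated`."""
    return {index[fp] for fp in fingerprints(generated, window)
            if fp in index and index[fp][1] in COPYLEFT}


known_gpl = """def rotate(buf, n):
    n %= len(buf)
    return buf[n:] + buf[:n]
"""
index = build_index({known_gpl: ("example-gpl-lib", "GPL-3.0-only")})

ai_generated = """import os

def rotate(buf,   n):
    n %= len(buf)
    return buf[n:] + buf[:n]
"""
hits = flag_copyleft_matches(ai_generated, index)
print(hits)
```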

If this continues unchecked, we’re facing a systemic collapse scenario: organizations producing software faster than they can govern it and accumulating mountains of unpatched vulnerabilities in unmaintained dependencies, while AI silently introduces both security flaws and legal liabilities at scale.

The infrastructure supporting modern business becomes its greatest weakness—not because of sophisticated attacks, but because the foundation itself is fundamentally ungoverned.

The worst case isn't a single breach. It's an entire software ecosystem built on a foundation that was never actually secured.