Google Detects First Potentially AI-Generated Zero-Day Exploit

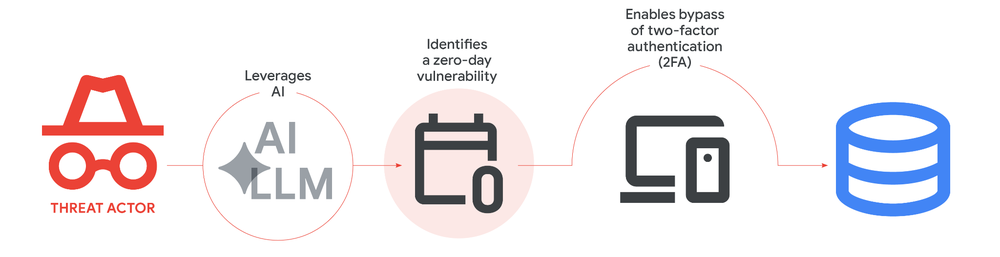

- Zero-day discovery: GTIG identified, for the first time, a criminal actor using a zero-day exploit that was likely developed with AI assistance.

- Exploitation event prevented: Proactive counter-discovery likely disrupted a planned mass compromise of an administrative tool.

- Autonomous malware operations: Adversaries are rapidly advancing AI-augmented malware development and autonomous execution frameworks.

Adversaries are increasingly leveraging artificial intelligence (AI) for vulnerability exploitation, augmented operations, and initial access. For the first time, the Google Threat Intelligence Group (GTIG) identified a criminal threat actor using a zero-day exploit that researchers believe was developed with AI assistance. According to the report, these findings reflect a maturing shift toward the industrial-scale use of generative models in adversarial workflows.

Zero-Day and State-Sponsored Threats

The planned campaign targeted a popular open-source, web-based system administration tool. The exploit, implemented as a Python script, abused a zero-day vulnerability that allowed an attacker to bypass two-factor authentication (2FA).

“It stems not from common implementation errors like memory corruption or improper input sanitization, but a high-level semantic logic flaw where the developer hardcoded a trust assumption,” according to the report.
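GTIG has not published the vulnerable code, so the sketch below is purely hypothetical. It illustrates the general class of flaw the report describes: a 2FA check that is correct on its main path but short-circuited by a hardcoded trust exception. The header name `X-Internal-Client`, the `LoginRequest` type, and the `otp_is_valid` stand-in are invented for illustration and do not come from the affected tool.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical trust assumption: requests claiming to come from an
# "internal" client are assumed to have already passed 2FA.
TRUSTED_CLIENT_HEADER = "X-Internal-Client"  # hypothetical header name


@dataclass
class LoginRequest:
    username: str
    password_ok: bool        # result of the (already performed) password check
    otp_code: Optional[str]  # one-time code supplied by the client, if any
    headers: dict            # client-controlled request headers


def otp_is_valid(username: str, code: Optional[str]) -> bool:
    """Hypothetical stand-in for a real TOTP check: compares against a fixed code."""
    return code == "123456"


def login_vulnerable(req: LoginRequest) -> bool:
    """Each line looks reasonable, but the hardcoded exception skips 2FA."""
    if not req.password_ok:
        return False
    # Semantic logic flaw: a client-controlled header is trusted as proof
    # that 2FA already happened, so the OTP check below is never reached.
    if req.headers.get(TRUSTED_CLIENT_HEADER) == "1":
        return True
    return otp_is_valid(req.username, req.otp_code)


def login_fixed(req: LoginRequest) -> bool:
    """2FA is enforced on every path; no client-supplied signal can waive it."""
    return req.password_ok and otp_is_valid(req.username, req.otp_code)


if __name__ == "__main__":
    # An attacker who knows the password but not the one-time code.
    attack = LoginRequest("admin", password_ok=True, otp_code=None,
                          headers={TRUSTED_CLIENT_HEADER: "1"})
    print(login_vulnerable(attack))  # True: 2FA bypassed
    print(login_fixed(attack))       # False: OTP still required
```

Neither function contains memory corruption or unsanitized input, which is why a pattern-based scanner may pass the vulnerable version. The bug only becomes visible when the exception is read against the intent of the 2FA requirement.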

Beyond vulnerability discovery, the report detailed a surge in AI-augmented malware development and autonomous attack orchestration. A primary example is PROMPTSPY, an Android backdoor first identified by ESET that facilitates autonomous malware operations by interpreting system states to dynamically generate commands without human supervision.

Furthermore, the report outlined sophisticated threat activity associated with the People's Republic of China (PRC) and the Democratic People's Republic of Korea (DPRK), focusing on AI-augmented vulnerability discovery workflows. Recently, the suspected China-linked actor UNC2814 has pursued vulnerability research targeting TP-Link firmware and Odette File Transfer Protocol (OFTP) implementations.

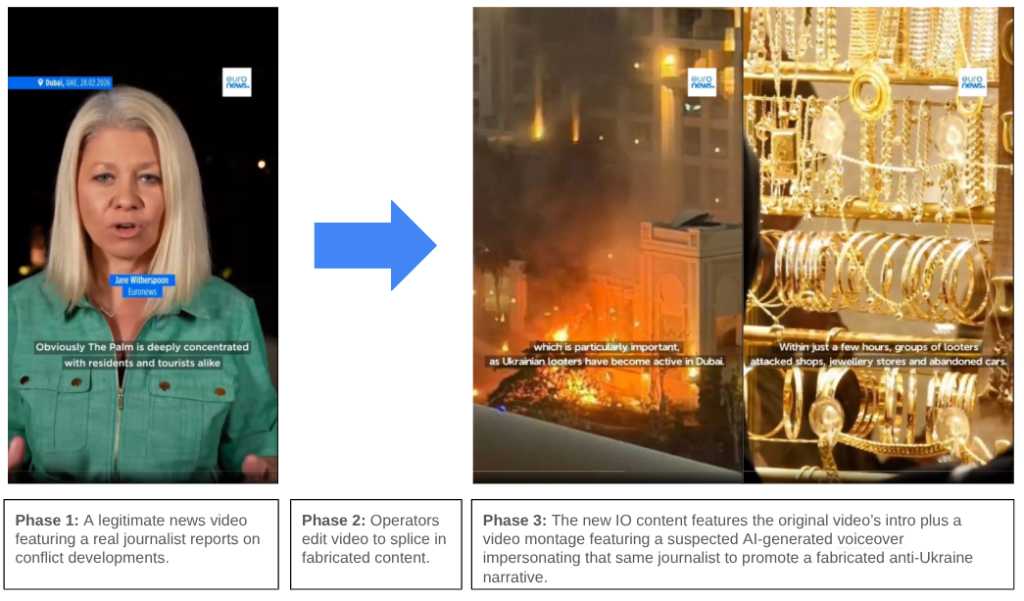

GTIG also observed Russia-nexus intrusion activity that integrated AI-generated decoys into malware to obfuscate its functionality and evade defenses, as well as activity linked to the pro-Russia campaign “Operation Overload,” which leveraged suspected AI voice cloning to impersonate real journalists in videos.

“Actors are also experimenting with agentic tools such as OpenClaw and OneClaw alongside intentionally vulnerable testing environments,” the report mentioned.

Mitigation

Following the identification of this threat, GTIG worked directly with the affected vendor to responsibly disclose the vulnerability. Researchers assess that this proactive counter-discovery likely prevented a mass exploitation event. Although the exploit shows signs of AI-assisted development, GTIG explicitly noted that it does not believe Google's Gemini model was used in this case.

“Though frontier LLMs struggle to navigate complex enterprise authorization logic, they have an increasing ability to perform contextual reasoning, effectively reading the developer's intent to correlate the 2FA enforcement logic with the contradictions of its hardcoded exceptions,” GTIG said.

“This capability can allow models to surface dormant logic errors that appear functionally correct to traditional scanners but are strategically broken from a security perspective.”

In a recent interview with TechNadu, Bitdefender’s security analyst Alina Bizga warned that fraud becomes more accessible as AI tools and Scam-as-a-Service platforms enable coordinated campaigns.