8 Tech Giants’ CSAM Reporting Deficiencies Investigated Following NCMEC Documented Findings

- Congressional oversight: Senate Judiciary Committee Chairman Chuck Grassley has opened an investigation into eight major technology companies over their failure to provide actionable information when reporting child sexual abuse material (CSAM).

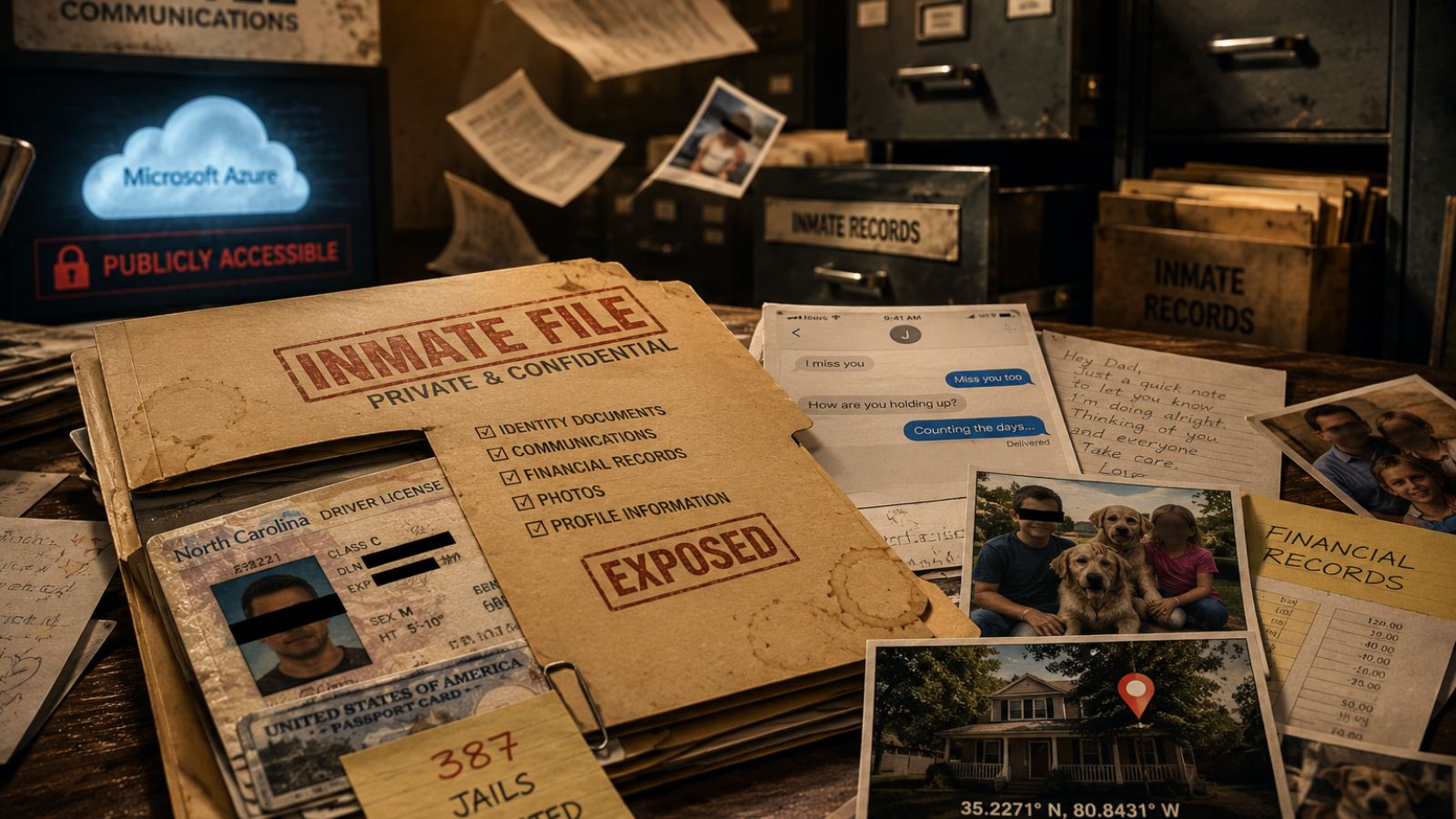

- Data quality deficiencies: Despite submitting millions of reports, platforms routinely omit the location data and suspect identification details that the NCMEC CyberTipline needs to act on them.

- Industry-wide scope: Senator Grassley's investigation covers Meta, TikTok, Amazon, and other major platforms, and examines how they implement and comply with child-safety protocols.

Senate Judiciary Committee Chairman Chuck Grassley has opened an investigation into how eight major technology companies, including Meta, TikTok, and Amazon, report child sexual abuse material (CSAM). The investigation stems from documented findings by the National Center for Missing and Exploited Children (NCMEC) that these platforms systematically fail to provide sufficient information when reporting CSAM incidents.

Although these companies collectively submitted approximately 17 million reports of suspected online child exploitation to the NCMEC CyberTipline in 2025, millions of those submissions lack information that investigators need, according to a press release.

Investigation Encompasses Major Technology Platforms

The eight platforms targeted in Senator Grassley's investigation, and the most significant reporting issues identified for each, are:

- Meta – Failures to escalate suicidal ideation by a child and “consistency and quality issues” with reports related to online enticement and child sex trafficking.

- Amazon AI Services – Failures to provide location or suspect information and to transparently disclose or explain the detection of CSAM in its AI training set.

- TikTok – Routinely reporting content unrelated to child exploitation, with occasional reports of CSAM.

- Snapchat – “Quality issues” with reports related to unsolicited obscene material sent to a minor, including failures to submit substantive information in reports involving chat.

- Discord – Failures to provide location or account information for individuals involved in reported chat logs and routine reports of adult and graphic gore content unrelated to CSAM.

- X.AI – Inactionable reports due to limited user information.

- Grindr – Routine failures to include critical location information in reports.

- Roblox – Failures to identify child victims in online chat reports and to regularly report incidents of sadistic online exploitation victimizing children.

Without basic data elements such as precise location information and suspect identification details, these reports cannot be acted on.

The investigation also exposes significant concerns regarding inadequate reporting of sadistic exploitation content and the unregulated incorporation of such material within generative AI training datasets.

Companies With Poor Reporting in 2025

NCMEC said some companies that submit more than 100 CyberTipline reports provide incomplete information about a suspect or child victim. The share of each company's reports that contained actionable location information was:

- Amazon AI Services – 0% of 1.1 million reports.

- Grindr – only 4% of 111,000 reports.

- Invoke AI – 0% of 2,838 reports.

- Lightspeed Systems – 0% of 1,549 reports.

- Redgifs.com – 0% of 1,042 reports.

- Box Inc. – only 12% of 1,131 reports.

- Internet Archive – only 17% of 820 reports.

- Streamable Inc. – 39% of 1,596 reports.

- Zoom Video Communications Inc. – 45% of 768 reports.

Critical Implications for Tech Giants

Grassley has mandated that the eight companies respond in full to NCMEC's documented allegations. The platforms must submit detailed plans specifying how they will improve their internal reporting protocols and ensure that actionable information reaches the appropriate authorities within the current fiscal year.

Sadistic online exploitation groups, such as the 764 Network, have been known to entice children on Roblox into acts of self-harm, Grassley added in the press release.

In February, an alleged affiliate of the 764 Network known online as Baggeth was arrested and charged with receiving CSAM, and faces up to 20 years in prison. Another alleged member was arrested in April 2025.