GrafanaGhost Exploit Exfiltrates Sensitive Grafana Business Data via Indirect Prompt Injection

- Silent data breach: A newly observed Grafana vulnerability enables automatic, undetected data exfiltration without requiring user interaction or administrative authentication.

- Indirect prompt injection: Attackers bypass security guardrails by using specific keywords and protocol-relative URLs to manipulate the underlying AI model's behavior.

- Critical data exposed: This data exfiltration attack threatens highly sensitive enterprise telemetry, real-time financial metrics, and private customer information.

A critical Grafana flaw, dubbed the GrafanaGhost vulnerability, allows malicious actors to silently extract sensitive business data from the widely utilized open-source data visualization platform. The core of this Grafana data exfiltration attack relies entirely on indirect prompt injection.

Threat actors do not need compromised user credentials or successful phishing campaigns to execute the breach. Instead, they exploit how Grafana’s AI components process external context and instructions.

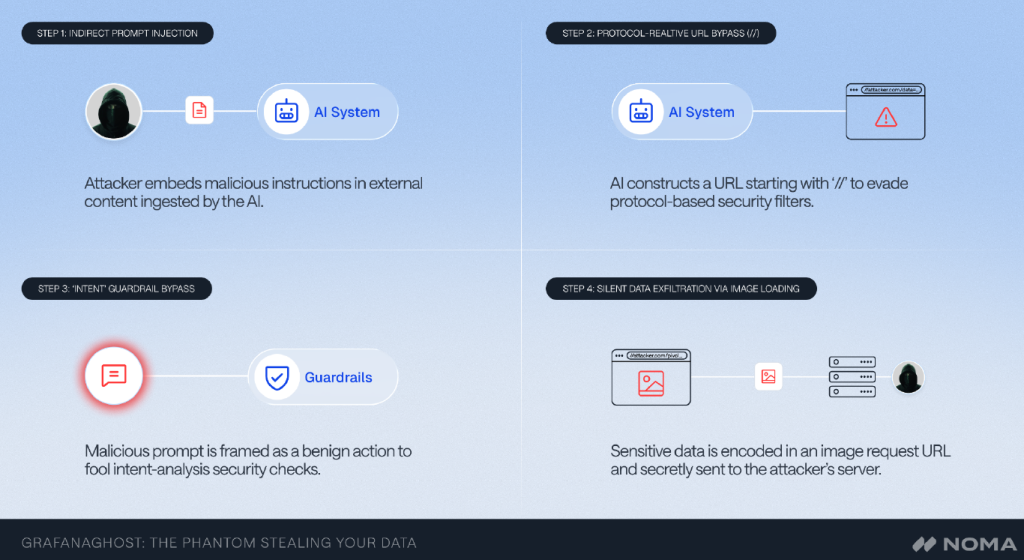

Grafana Exfiltration Attack Mechanism

Security researchers at Noma found that by crafting a specific foreign path and injecting hidden instructions containing the keyword "INTENT," attackers can force the AI to ignore its built-in security guardrails.

Furthermore, attackers bypass client-side domain validation by using protocol-relative URLs, which begin with // rather than a scheme such as https://, resulting in automatic data exfiltration via image loading. This legacy URL form tricks the application into rendering untrusted external images, silently attaching the victim's sensitive data as URL parameters on the image request.
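To make the protocol-relative trick concrete, here is a minimal Python sketch of why such URLs can slip past a simplistic check. It is not Grafana's actual validation code, which the research summary does not reproduce; the host names, the `naive_is_safe` and `resolved_is_safe` helpers, and the payload are all hypothetical and exist only for illustration.

```python
from urllib.parse import urljoin, urlsplit

# Hypothetical values, chosen only for this illustration.
PAGE_URL = "https://grafana.example.internal/d/abc"
ALLOWED_HOSTS = {"grafana.example.internal"}


def naive_is_safe(src: str) -> bool:
    """Flawed check: assumes anything starting with '/' is a same-origin path."""
    if src.startswith("/"):
        return True
    return urlsplit(src).hostname in ALLOWED_HOSTS


def resolved_is_safe(src: str, page_url: str = PAGE_URL) -> bool:
    """Resolve the reference against the page URL first, then check the host."""
    parts = urlsplit(urljoin(page_url, src))
    return parts.scheme in {"http", "https"} and parts.hostname in ALLOWED_HOSTS


# A protocol-relative image source carrying data in its query string.
payload = "//attacker.example/pixel.png?d=secret"

print(naive_is_safe(payload))     # True: '//' looks like a local path
print(resolved_is_safe(payload))  # False: resolves to attacker.example
```

The design point is that a validator should resolve a reference the same way a browser would before comparing hosts, rather than pattern-matching on the raw string.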

This invisible threat executes autonomously in the background, leaving no standard access alerts or denied entry screens for network administrators to trace.

Severe Implications for Enterprise Cybersecurity

Because Grafana functions as the central nervous system for corporate data, the information at risk is exceptionally sensitive. Consequently, the GrafanaGhost vulnerability poses a profound challenge to enterprise cybersecurity, proving that traditional perimeter defenses remain insufficient against advanced AI manipulation, according to Ram Varadarajan, CEO at Acalvio.

“Security teams must move beyond application-layer toggles to network-level URL blocking and treat prompt injection as a primary threat rather than an edge case,” Varadarajan added.

Following responsible disclosure protocols, developers have validated the security findings and promptly deployed a patch to secure affected environments. Diana Kelley, CISO at Noma Security, told TechNadu in an interview that “research has shown that indirect prompt injection can be weaponized through data feeds or retrieval-augmented generation (RAG) systems.”

Bradley Smith, SVP, Deputy CISO at BeyondTrust, recommends that organizations:

- Determine exposure: Identify whether Grafana AI/LLM assistant features are enabled in their environment.

- Patch: Confirm Grafana is updated to the latest version, and that critical updates are auto-applied.

- Egress controls: Validate that Grafana servers and client browsers are subject to egress filtering that would block or alert on outbound requests to unrecognized domains.

- CSP review: Ensure Content Security Policy headers on Grafana instances restrict img-src to known domains.

- Broader AI security posture: Use this as a case study for the risk of AI components processing untrusted or externally-influenced data.
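As one way to act on the CSP review item above, the following Python sketch audits a Content-Security-Policy header value for image sources outside an expected set. It is a simplified illustration, not a complete CSP parser: the `parse_csp` and `img_src_findings` helpers and the sample header are assumptions made for this example, and the check compares source expressions as literal strings only.

```python
from __future__ import annotations


def parse_csp(header: str) -> dict[str, list[str]]:
    """Split a CSP header value into {directive: [source expressions]}."""
    directives: dict[str, list[str]] = {}
    for part in header.split(";"):
        tokens = part.split()
        if tokens:
            # If a directive is repeated, the first occurrence is kept.
            directives.setdefault(tokens[0].lower(), tokens[1:])
    return directives


def img_src_findings(header: str, allowed_sources: set[str]) -> list[str]:
    """Report image sources that are not in the expected allowlist."""
    directives = parse_csp(header)
    # img-src falls back to default-src when it is absent.
    sources = directives.get("img-src", directives.get("default-src"))
    if sources is None:
        return ["no img-src or default-src directive: images may load from any origin"]
    return [f"unexpected img-src source: {s}" for s in sources if s not in allowed_sources]


# Hypothetical header value for demonstration.
sample = "default-src 'self'; img-src 'self' data: *"
for finding in img_src_findings(sample, {"'self'", "data:"}):
    print(finding)
```

A wildcard or unfamiliar host in the findings suggests that an injected image URL could still reach an external server, which is where network-level egress filtering acts as a second layer.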

Last month, researchers observed a Claude.ai vulnerability, dubbed Claudy Day, that chained prompt injection, open redirects, and data exfiltration.