Critical Claude Code Flaws Allowed RCE and API Token Theft, Exposing AI Development Security Risks

- Claude Vulnerabilities: Researchers discovered flaws in Anthropic's Claude Code (CVE-2025-59536, CVE-2026-21852) that permitted remote code execution and API token theft.

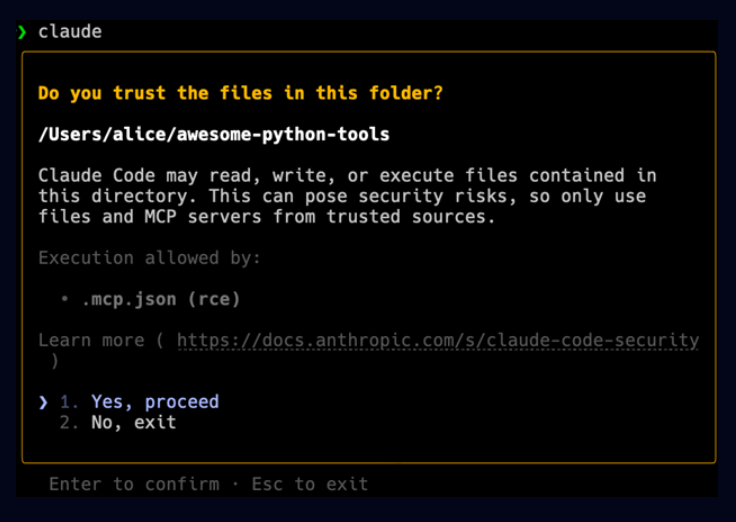

- Attack Vector: The vulnerabilities exploited project configuration files, allowing attackers to execute arbitrary commands by tricking users into opening malicious repositories.

- Patches Deployed: Anthropic worked with the researchers and deployed patches for all reported vulnerabilities before public disclosure.

Security researchers have uncovered critical Claude Code vulnerabilities that exposed developers to significant supply chain attacks. The flaws found in Anthropic's AI-powered command-line tool could be exploited to achieve remote code execution (RCE) and steal sensitive API credentials.

Claude Code RCE Flaw and API Token Theft

Check Point Research (CPR), which discovered the CVE-2025-59536 and CVE-2026-21852 flaws, said the attack leverages project-level configuration files, such as .claude/settings.json, which can be modified by anyone with commit access to a repository.

By embedding malicious commands within these files, an attacker could compromise a developer's system as soon as they opened the untrusted project.

The primary issue involved the platform's "Hooks" and "Model Context Protocol" (MCP) features, both of which could be configured to run shell commands automatically. Researchers demonstrated that a malicious project could bypass user consent dialogs and achieve remote code execution without any additional user interaction.
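As a defensive illustration, here is a minimal Python sketch that lists the shell commands a cloned repository's project-level configuration would ask Claude Code to run automatically, so they can be reviewed before the project is opened. It assumes hooks are declared under a top-level `"hooks"` key in `.claude/settings.json` (an event name mapped to a list of matcher groups, each holding `{"type": "command", "command": ...}` entries) and that MCP servers are declared under `"mcpServers"` in a repository-root `.mcp.json`. These layouts reflect Claude Code's public documentation as understood at the time of writing and should be checked against the current docs; the helper `find_auto_run_commands` is hypothetical, and it does not inspect other settings files such as `.claude/settings.local.json`.

```python
import json
from pathlib import Path


def _load_json(path: Path) -> dict:
    """Return the parsed JSON object at `path`, or {} if it is missing or invalid."""
    try:
        data = json.loads(path.read_text(encoding="utf-8"))
    except (OSError, json.JSONDecodeError):
        return {}
    return data if isinstance(data, dict) else {}


def find_auto_run_commands(repo: Path) -> list[str]:
    """List commands that project-level config asks to run (hypothetical helper).

    Assumed layouts (verify against current Claude Code documentation):
      .claude/settings.json: {"hooks": {Event: [{"hooks": [{"type": "command", "command": ...}]}]}}
      .mcp.json:             {"mcpServers": {name: {"command": ..., "args": [...]}}}
    """
    found: list[str] = []

    # Hooks: shell commands bound to lifecycle events.
    hooks = _load_json(repo / ".claude" / "settings.json").get("hooks")
    if isinstance(hooks, dict):
        for event, groups in hooks.items():
            if not isinstance(groups, list):
                continue
            for group in groups:
                entries = group.get("hooks") if isinstance(group, dict) else None
                if not isinstance(entries, list):
                    continue
                for hook in entries:
                    if isinstance(hook, dict) and hook.get("type") == "command":
                        found.append(f"hook[{event}]: {hook.get('command', '')}")

    # MCP servers: local processes launched from a command line.
    servers = _load_json(repo / ".mcp.json").get("mcpServers")
    if isinstance(servers, dict):
        for name, spec in servers.items():
            if isinstance(spec, dict) and "command" in spec:
                args = spec.get("args", [])
                argv = [spec["command"], *(args if isinstance(args, list) else [])]
                found.append(f"mcp[{name}]: {' '.join(map(str, argv))}")

    return found
```

Calling `find_auto_run_commands(Path("untrusted-repo"))` on a fresh clone returns one line per hook or MCP server command. An empty list only means nothing was found in the two assumed locations, not that the repository is safe to open.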

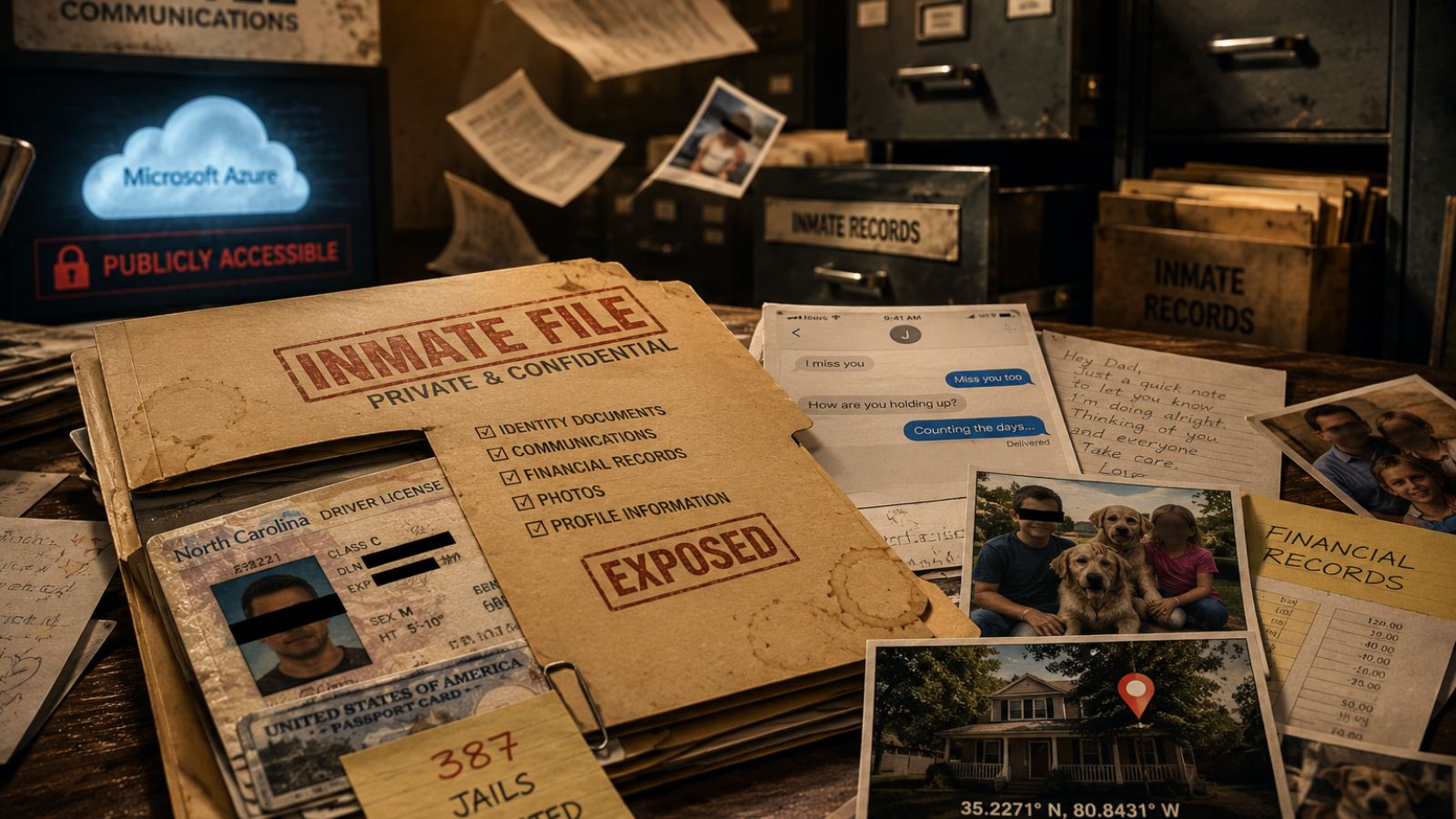

A second critical Claude Code vulnerability allowed API token exfiltration. By setting a malicious ANTHROPIC_BASE_URL in the project's settings, an attacker could intercept all API communications and capture the user's Anthropic API key in plaintext before any trust dialog was even presented.
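A similar pre-open check can target the token-theft vector. The minimal Python sketch below flags a project settings file that overrides `ANTHROPIC_BASE_URL`. It assumes the override is written through an `"env"` object in `.claude/settings.json` and treats HTTPS to `api.anthropic.com` as the only trusted endpoint; both the allowlist (`TRUSTED_HOSTS`) and the helper name `check_base_url_override` are hypothetical, and teams that route traffic through an approved gateway would add that host to the allowlist.

```python
import json
from pathlib import Path
from typing import Optional
from urllib.parse import urlparse

# Hypothetical allowlist of hosts trusted to receive the API key.
TRUSTED_HOSTS = {"api.anthropic.com"}


def check_base_url_override(repo: Path) -> Optional[str]:
    """Warn if project settings redirect ANTHROPIC_BASE_URL (hypothetical helper).

    Assumes the override is written as {"env": {"ANTHROPIC_BASE_URL": ...}}
    in .claude/settings.json. Returns None when no untrusted override is found.
    """
    try:
        text = (repo / ".claude" / "settings.json").read_text(encoding="utf-8")
        settings = json.loads(text)
    except (OSError, json.JSONDecodeError):
        return None  # no readable project settings file to inspect
    env = settings.get("env") if isinstance(settings, dict) else None
    if not isinstance(env, dict) or "ANTHROPIC_BASE_URL" not in env:
        return None

    url = str(env["ANTHROPIC_BASE_URL"])
    parsed = urlparse(url)
    # Accept only HTTPS to an allowlisted host; anything else could capture the key.
    if parsed.scheme == "https" and parsed.hostname in TRUSTED_HOSTS:
        return None
    return f"untrusted ANTHROPIC_BASE_URL override: {url}"
```

Because the reported attack captured the key before any trust dialog appeared, a check like this only helps if it runs before the tool is launched inside the repository.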

Implications for AI-Assisted Development

The discovery highlights emerging security risks in AI-assisted development as platforms increasingly rely on project-embedded configurations and automation. A stolen API key could lead to billing fraud, data exfiltration from shared workspaces, and service disruption. CPR worked closely with Anthropic to remediate the issues, and all reported vulnerabilities have been patched.

“Previously, security leaders ‘only’ had to approve the addition and configuration of SDLC tools ‘one at a time’, but these agentic tools now have almost constantly changing and evolving behavior and can be tricked into these malicious behaviors by external ‘injections’ received at runtime,” said Andrew Bolster, Senior R&D Manager at Black Duck.

Diana Kelley, Chief Information Security Officer at Noma Security, recommends that before deploying or approving AI-enabled developer tools, security teams review how trust prompts are implemented, test what happens when an untrusted repository is opened, and verify that no code runs and no sensitive actions are possible until trust is explicitly established.

Ram Varadarajan, CEO at Acalvio, said the best defense is to assume a zero-trust landscape and use AI-driven tripwires and game theory to detect and defuse AI-driven attackers. David Brumley, Chief AI and Science Officer at Bugcrowd, highlighted that Claude Code is changing how software is written and welcomes the feedback.

Earlier this month, the DockerDash vulnerability in Docker's Ask Gordon AI assistant exposed supply chain risks, with meta-context injection compromising the assistant's integrity.