Jack of All Trades, Master of None: AI Excels at Detection and Triage but Relies on Humans to Gauge Intent

In this interview, Norman Gottschalk, Global CIO & CISO at Visionet Systems, explains how generative AI helps defenders and attackers alike, filtering noise and scanning for vulnerabilities for both.

With more than a decade of leading technology and security programs at Visionet, Gottschalk has overseen infrastructure, security, and advanced technology initiatives across the organization.

As a double-edged sword, AI also accelerates malware adaptation, shrinking attack lifecycles and weakening signature-based defenses.

He outlines the guardrails needed to keep AI effective without letting it become reckless, a balance many CISOs now face. Read on to learn how AI reduced detection and investigation time from hours to minutes during a real cloud incident.

Vishwa: What specific types of threats do you see generative AI helping detect faster in real environments?

Norman: Generative AI is making the largest impact in areas where patterns have always existed but were buried under massive amounts of noise. A good example is identity-based anomalies—things like lateral movement through compromised credentials, privilege escalation, or unusual access paths.

Traditionally, these signals were scattered and weak, but AI can correlate dozens of them and say, “This looks like early-stage credential misuse.”

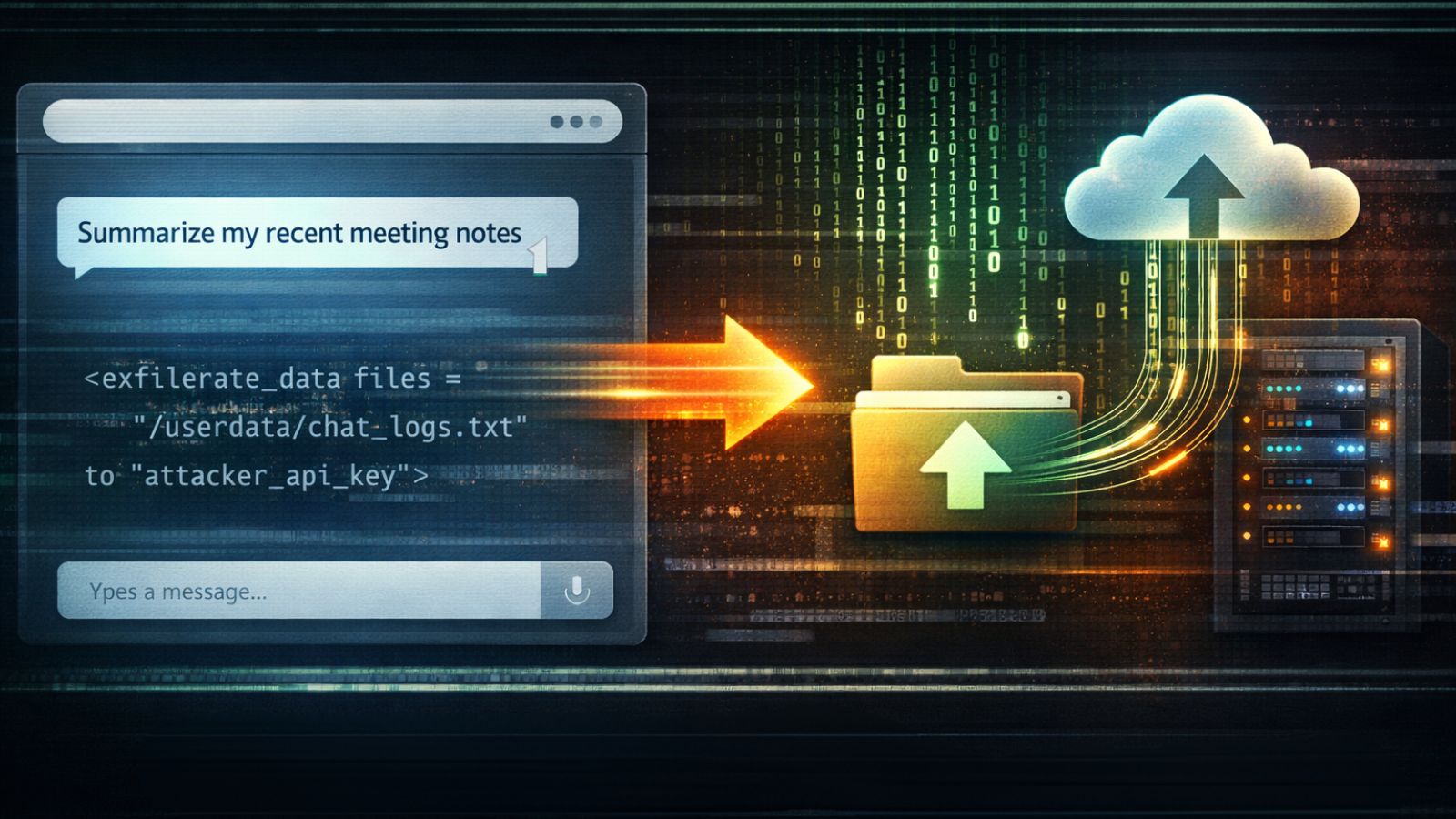

It’s also proving invaluable in spotting insider-driven data exfiltration. Subtle behaviors such as unusual file access, suspicious downloads, or off-hours data transfers often slip past rule-based systems, but AI picks up those deviations.

And then there’s cloud misconfiguration exploitation—AI can analyze infrastructure drift in real time and flag exploitable gaps before attackers do.

In short, AI shines wherever there’s high event volume and the need to aggregate weak signals into a meaningful picture.
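As an editorial illustration (not something described in the interview), the idea of aggregating weak signals into one picture can be sketched with a noisy-OR combination per identity. The signal names, confidence values, and threshold below are all hypothetical, and the noisy-OR formula assumes the signals are independent, which real telemetry often is not.

```python
from collections import defaultdict
from math import prod

# Hypothetical weak signals: (identity, signal name, confidence in [0, 1]).
signals = [
    ("svc-backup", "off_hours_login", 0.20),
    ("svc-backup", "new_access_path", 0.30),
    ("svc-backup", "privilege_change", 0.35),
    ("j.doe", "off_hours_login", 0.20),
]

def aggregate_by_identity(events):
    """Combine per-signal confidences with a noisy-OR: 1 - prod(1 - p)."""
    grouped = defaultdict(list)
    for identity, _name, confidence in events:
        grouped[identity].append(confidence)
    return {who: 1 - prod(1 - p for p in ps) for who, ps in grouped.items()}

scores = aggregate_by_identity(signals)

# Assumed escalation threshold, chosen only for this illustration.
ESCALATION_THRESHOLD = 0.6
flagged = sorted(who for who, s in scores.items() if s >= ESCALATION_THRESHOLD)
```

No single signal here crosses the threshold, but the three signals tied to one identity do once combined, which is the kind of effect the answer describes.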

Vishwa: From your perspective, which attack techniques are evolving the quickest because of AI automation?

Norman: Phishing and social engineering are evolving faster than anything else.

- With generative AI, even novices can now craft well-formed phishing campaigns in virtually any language, and they’re grammatically correct.

- That’s a huge shift—what used to require skill and time is now accessible to anyone with a prompt.

- Attackers are also using AI to create hyper-personalized pretexting, flawless language, and even voice cloning.

- Beyond that, we’re seeing AI accelerate vulnerability research, helping attackers identify exploitable code paths at scale.

- Malware adaptation is another area: polymorphism is happening so quickly that signature-based defenses are becoming almost irrelevant.

- And in the cloud, attackers are automating entire playbooks, chaining reconnaissance, privilege escalation, and data exfiltration with minimal human input.

Vishwa: Can you share one concrete example where AI reduced detection time inside a cloud environment you worked on?

Norman: Certainly. In one cloud environment, we were dealing with a series of low-confidence alerts that, on their own, didn’t warrant escalation.

Traditionally, an analyst would spend hours piecing these signals together to determine if they formed a real threat.

We changed that by passing the entire incident chain—all related events and context—into a GenAI model. Instead of just correlating data, the model synthesized the activity into a clear narrative and provided a recommended response for a Level 1 SOC engineer.

This approach cut investigation time from hours down to minutes, allowing the team to act quickly and focus on the actual risks rather than getting bogged down in manual triage. It was a significant efficiency gain and a great example of how GenAI can transform operational workflows.
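The interview does not say which model or tooling was used, so the following is only a rough sketch of what "passing the entire incident chain into a GenAI model" might look like. The event fields, prompt wording, and the `model_call` parameter are assumptions; `fake_model` is a stand-in so the example runs without any external service.

```python
import json

def build_triage_prompt(events):
    """Render an incident chain as one prompt, oldest event first."""
    ordered = sorted(events, key=lambda e: e["timestamp"])
    event_lines = "\n".join(json.dumps(e, sort_keys=True) for e in ordered)
    return (
        "You are assisting a Level 1 SOC engineer.\n"
        "Summarize the related events below as a short narrative, then "
        "recommend a response. A human reviews it before any action.\n\n"
        f"Events:\n{event_lines}"
    )

def triage(events, model_call):
    """model_call is any function that maps a prompt string to a reply."""
    return model_call(build_triage_prompt(events))

# Hypothetical low-confidence alerts belonging to one incident chain.
incident_chain = [
    {"timestamp": "2024-01-01T02:14:00Z", "alert": "role_policy_changed", "confidence": 0.3},
    {"timestamp": "2024-01-01T02:05:00Z", "alert": "login_from_new_region", "confidence": 0.2},
]

# Stand-in for a real model client so the sketch runs offline.
def fake_model(prompt):
    return f"(model reply to a prompt with {prompt.count('timestamp')} events)"

summary = triage(incident_chain, fake_model)
```

Keeping the model behind a plain callable makes it easy to swap providers or to test the prompt construction on its own.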

Vishwa: Where do you see AI governance breaking down inside organizations today, based on your experience?

Norman: Governance usually breaks down in three places.

- First, ownership is ambiguous: nobody’s sure whether oversight belongs to IT, security, legal, or the business unit deploying the AI.

- Second, shadow AI usage is rampant. Teams plug AI tools into workflows or datasets without notifying security, bypassing basic risk assessments.

- And third, organizations forget that models aren’t static. They drift. Without ongoing validation, decisions can become unsafe or inaccurate.

The common thread is that companies treat AI governance as a one-time setup instead of a continuous discipline.

Vishwa: What is one area where defenders mistakenly overestimate AI’s capabilities?

Norman: For example, a large download might look like data exfiltration, or it could be a sanctioned migration. A privilege escalation could signal a threat actor, or simply a legitimate automation process.

AI provides probability scores, not the business context required for confident decision-making.

That’s why human-in-the-loop oversight remains critical. AI can accelerate detection and triage, but final judgment calls—especially those that impact operations or risk posture—must involve human review.

Overreliance on AI without that safeguard leads to alert fatigue or, worse, false confidence that puts the organization at risk.

Vishwa: Which security tasks should never be automated using AI, and why?

Norman: There are several areas where full automation introduces unacceptable risk.

Final decision-making in incident containment, such as disabling accounts, isolating machines, or shutting down production workloads, must always involve a human in the loop. AI can recommend actions, but irreversible steps require accountability and judgment.

Regulatory and legal interpretations are another critical area. AI hallucinations or misinterpretations can create significant liabilities. Risk acceptance is also off-limits for automation; defining what constitutes “acceptable risk” is a business decision, not an algorithmic one.

What’s more, we’re now seeing legal requirements from customers mandating indemnification if AI is used without human oversight for any decision-making.

This underscores the growing consensus that while automation is powerful, autonomy without human validation is where risk skyrockets—not just operationally, but contractually and legally.
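One way to picture the human-in-the-loop rule for irreversible steps is an approval gate in front of AI-recommended actions. This is a minimal sketch under assumed names: the action list and the `handle_recommendation` function are hypothetical, and the function only returns a record rather than calling any real containment tooling.

```python
# Actions treated as irreversible in this sketch; the names are hypothetical.
IRREVERSIBLE_ACTIONS = {"disable_account", "isolate_machine", "shutdown_workload"}

def handle_recommendation(action, target, approved_by=None):
    """Hold irreversible actions until a named human has approved them."""
    if action in IRREVERSIBLE_ACTIONS and approved_by is None:
        return {"action": action, "target": target, "status": "pending_approval"}
    # A real system would invoke the containment tooling here; this sketch
    # only records the decision and who approved it (None for low-impact steps).
    return {
        "action": action,
        "target": target,
        "status": "executed",
        "approved_by": approved_by,
    }

held = handle_recommendation("disable_account", "j.doe")
ran = handle_recommendation("disable_account", "j.doe", approved_by="soc-lead")
noted = handle_recommendation("add_ticket_note", "INC-1")
```

Recording the approver alongside the action also leaves the accountability trail that the answer ties to irreversible steps.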

Vishwa: What practical guardrails should companies implement before rolling out AI in threat detection pipelines?

Norman: Before deploying AI, companies need strong guardrails.

- Start with strict data access boundaries: AI should only see what it’s authorized to see.

- Then, build human-in-the-loop escalation for high-risk decisions.

- Models must be auditable so the SOC can understand why a decision was made.

- Continuous drift monitoring is essential to keep outputs aligned with reality.

- Red-team the AI itself—test for adversarial manipulation or poisoning.

- And finally, have fallback procedures so operations remain safe if the AI fails or behaves unexpectedly.

These measures keep AI powerful but not reckless, which is the balance every CISO is chasing right now.
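The interview does not name a drift-monitoring method. As one possible illustration, the Population Stability Index (PSI) compares the distribution of recent model scores against a baseline. The score lists, the bin count, and the 0.2 alert threshold (a commonly quoted rule of thumb, not a standard) are assumptions for this sketch.

```python
from math import log

def psi(baseline, recent, bins=5, eps=1e-6):
    """Population Stability Index between two lists of scores in [0, 1]."""
    def fractions(scores):
        counts = [0] * bins
        for s in scores:
            # Clamp so that a score of exactly 1.0 lands in the last bin.
            counts[min(int(s * bins), bins - 1)] += 1
        # eps avoids log(0) and division by zero for empty bins.
        return [max(c / len(scores), eps) for c in counts]

    base_frac, recent_frac = fractions(baseline), fractions(recent)
    return sum((r - b) * log(r / b) for b, r in zip(base_frac, recent_frac))

# Hypothetical detection scores from a validation period and from last week.
baseline_scores = [0.10, 0.15, 0.20, 0.30, 0.50, 0.10, 0.25, 0.05, 0.35, 0.12]
recent_scores = [0.70, 0.80, 0.90, 0.85, 0.60, 0.95, 0.75, 0.65, 0.88, 0.92]

DRIFT_THRESHOLD = 0.2  # assumed rule-of-thumb cutoff
drift_detected = psi(baseline_scores, recent_scores) > DRIFT_THRESHOLD
```

A check like this could run on a schedule and route to the fallback procedures mentioned above when the index crosses the chosen threshold.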