Cloud Security Automation Still Depends on Human Judgment

Question: If security systems start learning an organization’s environment and adapting in real time, how reliable is that in practice, and where would teams need to intervene and validate decisions?

Loris Degioanni, CTO and Founder of Sysdig

For security that learns and adapts in real time, reliability depends on understanding what the system is learning from and how tightly that learning is governed.

In most modern environments, so-called adaptive security systems have been far less dynamic than vendors advertise. They have been largely based on:

- pattern-matching with historical data,

- tuning thresholds, and

- flagging deviations from baselines built days, weeks, or months ago.

That’s useful, of course, but it breaks down quickly in modern infrastructure.

In cloud-native environments where assets are ephemeral and identities shift constantly, last week’s baseline is already outdated. When systems “adapt” via stale or low-fidelity data, they don’t become intelligent. Ultimately, they just become wrong.

That’s the core gap between advertised capabilities and “rubber meets the road” execution. The industry has over-indexed on detection “learning” but underinvested in the quality of the underlying signals it gathers. Real reliability comes from deterministic, runtime-grounded telemetry. That means understanding what’s actually running, what’s exposed, and what behavior is anomalous right now.

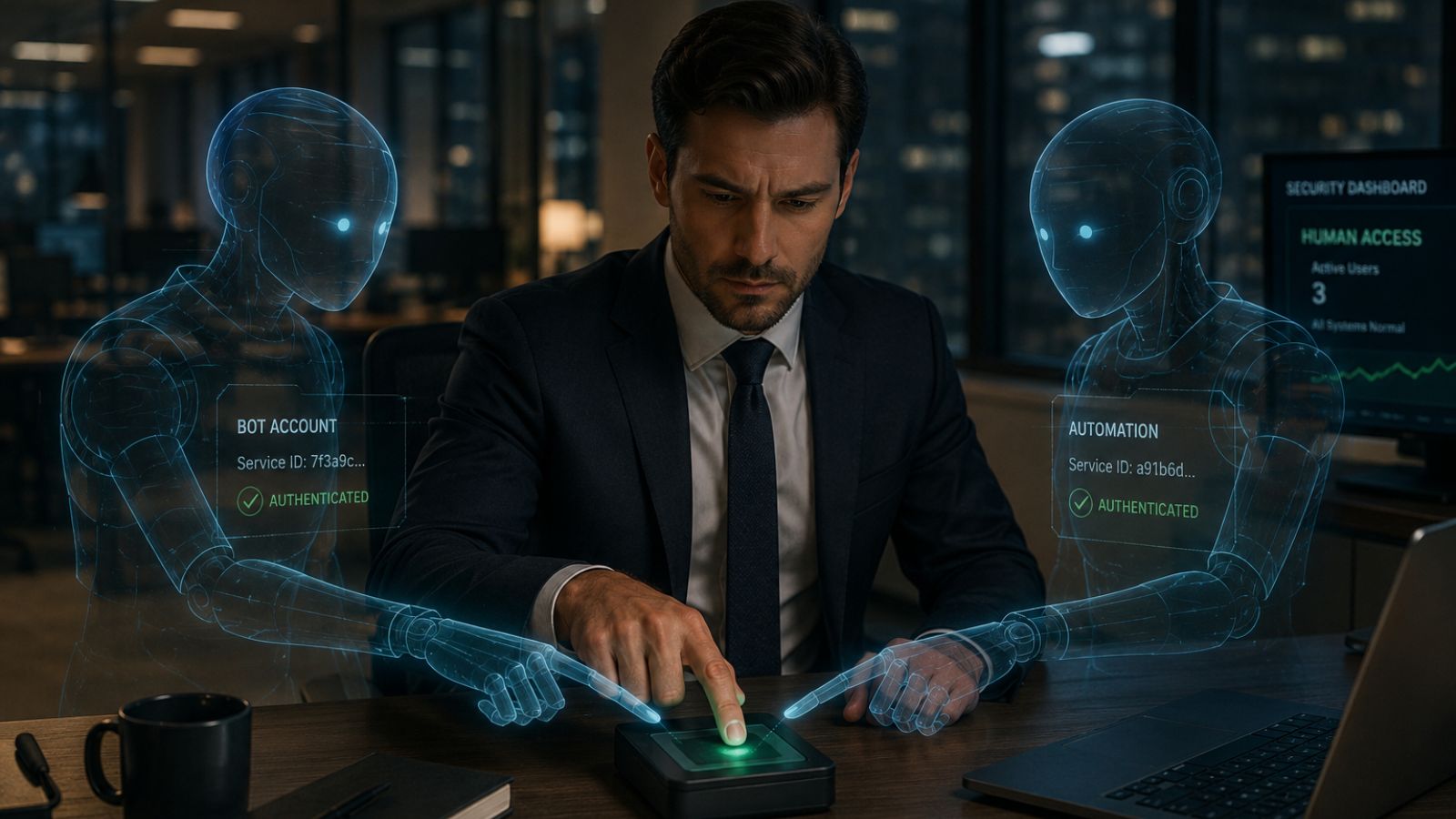

However, even with strong data, headless cloud security is not a fully hands-off model. With a domain as complex as cybersecurity, humans will always have a role to play. What’s changing in practice is not the removal of people, but the evolution of their position in the system.

Here’s what I mean: high-performing teams are already shifting away from manual triage and reactive workflows. They’re letting automated systems handle volume and velocity. This includes things like correlating signals, resolving low-risk issues, and enforcing known policies. They’ve become very deliberate, though, about where autonomy stops.

The model that works is risk-aligned:

- Low-risk, high-confidence actions are automated

- Higher-impact or ambiguous decisions require human oversight or approval

- Strategic choices, like risk tolerance, policy, and priorities, remain human-defined
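To make the risk-aligned model concrete, here is a minimal sketch of how such routing might look in code. It is an illustration only, not how Sysdig or any specific product implements it: the `Action` fields, the `route` function, and the 0.9 confidence threshold are hypothetical names and values chosen for the example. The threshold stands in for the human-defined risk tolerance from the third bullet.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = 1
    HIGH = 2


class Decision(Enum):
    AUTOMATE = "automate"
    REQUIRE_APPROVAL = "require_approval"


@dataclass(frozen=True)
class Action:
    name: str
    risk: Risk
    confidence: float  # 0.0 to 1.0, as reported by the detection system


# Hypothetical value: humans set this as part of the organization's risk tolerance.
MIN_AUTO_CONFIDENCE = 0.9


def route(action: Action, min_confidence: float = MIN_AUTO_CONFIDENCE) -> Decision:
    """Automate only low-risk, high-confidence actions; escalate everything else."""
    if action.risk is Risk.LOW and action.confidence >= min_confidence:
        return Decision.AUTOMATE
    # Higher-impact or ambiguous (low-confidence) actions go to a human.
    return Decision.REQUIRE_APPROVAL
```

The design choice worth noting is the default: anything that does not clearly qualify for automation falls through to human approval, so a gap in the rules results in a review rather than an unsupervised action.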

This is where many current approaches fall short. Vendors are racing to increase autonomy, but they’re underbuilding the governance layer that makes that autonomy both safe and reliable.

In practice, teams need clear answers to very basic questions. What did the system do? Why did it do it? What is it allowed to do? How do we override or refine that behavior?
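One way to picture those four questions is as the fields of an audit record written for every automated action. The sketch below is a hypothetical shape, assuming a simple JSON log line; the `AuditRecord` class, its field names, and the `record_action` helper are invented for illustration and do not describe any particular product's schema.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    action: str          # What did the system do?
    reason: str          # Why did it do it?
    policy_id: str       # What is it allowed to do? (the policy that permitted this)
    override_owner: str  # How do we override or refine it? (who to go to)
    timestamp: str       # When it happened, in UTC ISO 8601


def record_action(action: str, reason: str, policy_id: str, override_owner: str) -> str:
    """Serialize one automated action as a JSON line suitable for an audit log."""
    record = AuditRecord(
        action=action,
        reason=reason,
        policy_id=policy_id,
        override_owner=override_owner,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    return json.dumps(asdict(record), sort_keys=True)


# Example usage with made-up values:
line = record_action(
    action="revoked unused access key",
    reason="key unused beyond the policy's inactivity window",
    policy_id="example-policy-001",
    override_owner="cloud-security-team",
)
```

If a system cannot populate every one of these fields for a given action, that is a signal the action probably should not have been automated.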

With Sysdig Headless Cloud Security, explainability, auditability, and policy boundaries are built into the control plane. Without them, you don’t have real adaptive security. Instead, you have a black box making operational decisions about your environment.

The real shift here is that humans move from operators to orchestrators. Their role becomes defining intent (“What does good look like here?”), setting guardrails (“What should systems be allowed to do autonomously?”), and auditing outcomes. The work won’t be about chasing alerts, but about shaping how systems behave and improve over time.

Even here, there are still hard limitations. Adaptive systems struggle with novel attack patterns, ambiguous context, and anything that requires business-level judgment. They also inherit biases and gaps from the data on which they’re trained.

So what should organizations do differently with headless security in mind? I’d say there are two important things they can do today:

- Prioritize data quality over model complexity. If your signals aren’t real-time and reliable, no amount of “learning” will fix that.

- Invest in governance as seriously as detection. Define clear policy boundaries, implement approval workflows, and ensure every automated action is both explainable and auditable.

Headless, adaptive security becomes reliable when it’s appropriately governed, continuously validated, and built on signals that reflect reality. Without that, it’s just automation layered on top of blind spots.