Claude.ai: The Claudy Day Vulnerability Chains Prompt Injection, Open Redirects, and Data Exfiltration

- Exploit Chain Discovered: Researchers identified Claude.ai vulnerabilities that can be chained, combining AI prompt injection, an open redirect, and Files API abuse into a single attack pipeline.

- Silent Data Theft: Attackers could chain these exploits to silently extract sensitive conversation history from default user sessions.

- Patch Status Updated: Anthropic successfully patched the critical AI prompt injection flaw and continues to address the remaining infrastructure vulnerabilities.

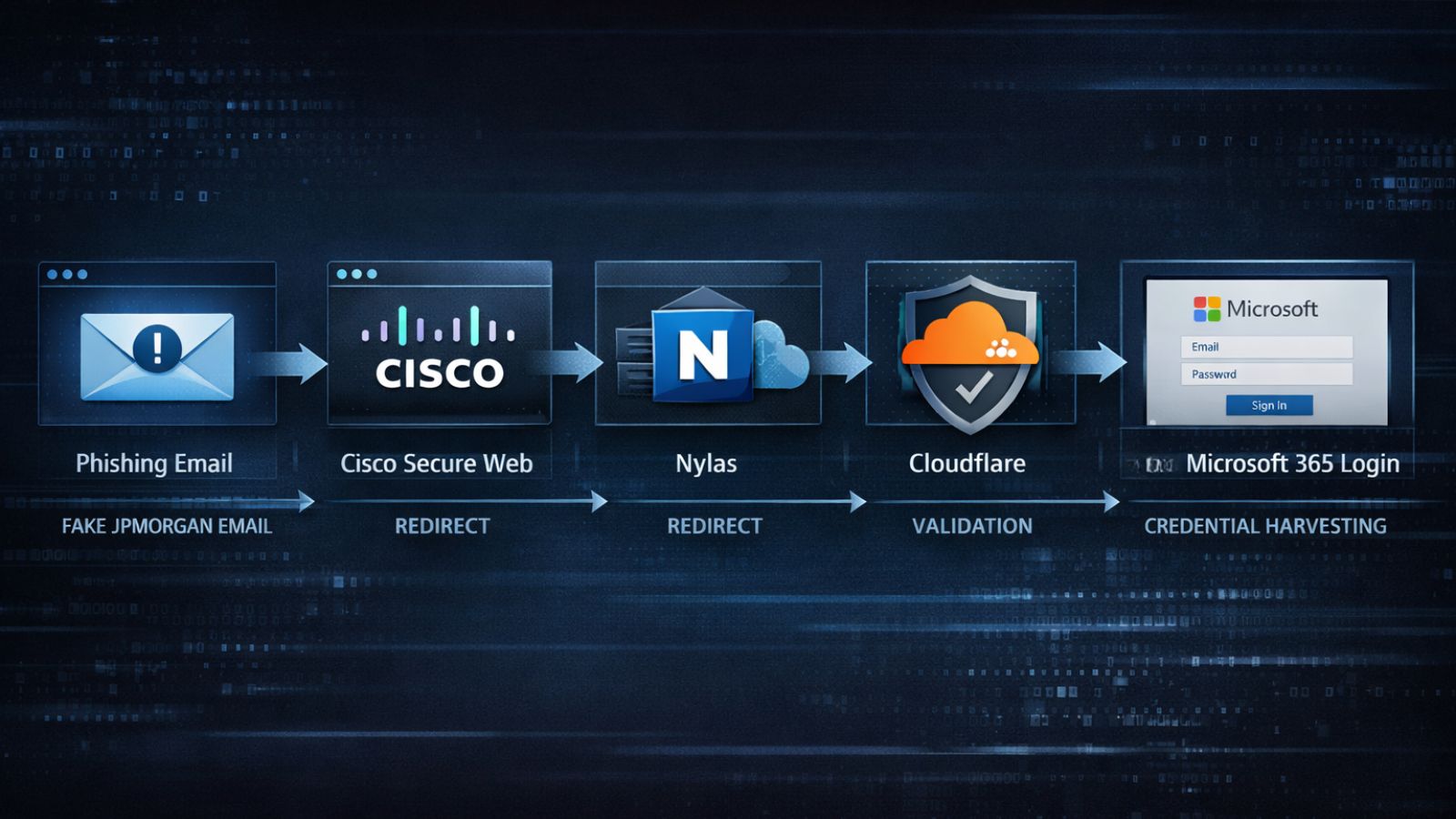

Researchers identified an exploit chain, dubbed "Claudy Day," affecting Claude.ai that links three vulnerabilities: invisible prompt injection via URL parameters, an open redirect on claude.com, and data exfiltration via the Anthropic Files API.

Claude Attack Chain

According to a recent disclosure by security researchers at Oasis Security, the attack can abuse the open redirect on claude.com, which may be combined with search engine advertisements, letting an ad display a legitimate claude.com address while redirecting the victim to an attacker-crafted link. When the victim clicks the link and submits the pre-filled prompt, Claude processes the hidden instructions embedded in the URL parameters.

The hidden prompt can include an attacker-controlled API key, directing Claude to package the user's conversation history and upload it to the attacker's Anthropic account via the Files API. The attack requires no external tools or additional integrations.
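On the defensive side, the usual remedy for an open redirect is to validate the destination before redirecting. The following is a minimal Python sketch of that idea, assuming a hypothetical allowlist (`ALLOWED_REDIRECT_HOSTS`) and a hypothetical helper (`is_safe_redirect`); it illustrates the general technique and is not Anthropic's actual fix.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist: the only hosts this site is willing to redirect to.
ALLOWED_REDIRECT_HOSTS = {"claude.ai", "claude.com"}


def is_safe_redirect(target: str) -> bool:
    """Allow same-site relative paths, or https URLs on an allowlisted host."""
    # Browsers treat backslashes like slashes ("/\evil.example" acts like
    # "//evil.example"), and control characters can hide tricks, so reject both.
    if "\\" in target or any(ord(c) < 0x20 for c in target):
        return False
    try:
        parts = urlsplit(target)
    except ValueError:  # e.g. a malformed IPv6 literal such as "https://[::1"
        return False
    if not parts.scheme and not parts.netloc:
        # Relative path such as "/settings"; "//host" would have set netloc.
        return target.startswith("/")
    # Absolute or scheme-relative URL: require https and an exact host match.
    # `hostname` is lowercased and excludes any "user@" prefix and port.
    return parts.scheme == "https" and parts.hostname in ALLOWED_REDIRECT_HOSTS
```

Rejecting anything that fails the check, rather than trying to clean it up, keeps the logic simple. Because `hostname` ignores the userinfo part, `https://claude.ai@evil.example/` is correctly rejected (its real host is `evil.example`), as are look-alikes such as `https://claude.ai.evil.example/`.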

Has the Claude.ai Vulnerability Been Fixed?

Yes. Anthropic has fixed the prompt injection vulnerability and is working to mitigate the remaining structural issues. Even so, organizations should proactively audit the integrations connected to their AI agents.

Claude AI Flaw Implications

In a standard, out-of-the-box session, the AI agent can access conversation history and memory, which may contain sensitive user information. If a user enables enterprise integrations, specialized tools, or Model Context Protocol (MCP) servers, the potential blast radius grows considerably.

With those integrations enabled, threat actors can instruct the compromised agent to read internal files, interact with connected application programming interfaces (APIs), and send messages autonomously. Organizations should:

- Inventory all AI agents and their integrations

- Gain visibility into AI agent usage

- Educate users that shared links and pre-filled prompts can contain hidden instructions

- Establish governance for AI agent identities
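To make the user-education point concrete, a shared link can be checked for content a reader is unlikely to notice before it is opened. The following Python sketch is a heuristic only. It assumes the prompt travels in a query parameter named `q` (a hypothetical name chosen for illustration; `PROMPT_PARAM` and `inspect_prefilled_prompt` are likewise hypothetical) and flags Unicode format characters (category `Cf`, such as zero-width spaces) and markup-like tags. It does not reproduce the exact technique Oasis Security described, and an empty result does not prove a link is safe.

```python
import re
import unicodedata
from urllib.parse import parse_qs, urlsplit

# Hypothetical: the query parameter assumed to carry a pre-filled prompt.
PROMPT_PARAM = "q"
# Anything shaped like an HTML/XML tag, which an input box might not render.
TAG_RE = re.compile(r"<[^>]+>")


def inspect_prefilled_prompt(link: str) -> list[str]:
    """Return warnings about prompt content a user might not see."""
    warnings = []
    # parse_qs percent-decodes values, so hidden characters appear as-is.
    params = parse_qs(urlsplit(link).query)
    for prompt in params.get(PROMPT_PARAM, []):
        # Category "Cf" covers zero-width and Unicode tag characters,
        # which typically render as nothing at all.
        hidden = sorted({f"U+{ord(c):04X}" for c in prompt
                         if unicodedata.category(c) == "Cf"})
        if hidden:
            warnings.append("invisible characters: " + ", ".join(hidden))
        tags = TAG_RE.findall(prompt)
        if tags:
            warnings.append("markup-like tags: " + ", ".join(tags))
    return warnings
```

For example, `inspect_prefilled_prompt("https://claude.ai/new?q=Summarize%20this%E2%80%8B%3Cspan%3Eignore%3C%2Fspan%3E")` returns `["invisible characters: U+200B", "markup-like tags: <span>, </span>"]`, while a link with a plain-text prompt returns an empty list.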

“Security leaders have a responsibility to prevent their AI assistants from being ‘socially engineered’ into disclosing sensitive or protected information or granting access,” said Andrew Bolster, Senior R&D Manager at Black Duck.

Saumitra Das, Vice President of Engineering at Qualys, highlighted that the prompt itself is now an attack surface, adding that developers and users are increasingly "dangerously skipping permission checks" to avoid interrupting the agent.

Last month, critical flaws in Claude Code allowed remote code execution (RCE) and API token theft. In January, PromptArmor disclosed a vulnerability in Anthropic Cowork that allowed file exfiltration via prompt injection without additional user approval, and in July 2025, researchers found a critical remote code execution vulnerability in the Anthropic MCP Inspector.