AWS Bedrock Sandbox Vulnerability Allows DNS Bypass, No Patch Available

- Network Isolation Failure: An AWS Bedrock vulnerability allows a sandbox mode DNS bypass, enabling external communication despite restricted network settings.

- Why This Matters: Attackers can trick the AI into executing malicious code through prompt injection or supply chain compromise.

- Unpatched Security Risk: AWS did not patch the issue, advising users concerned with AI security risks to migrate workloads to VPC mode.

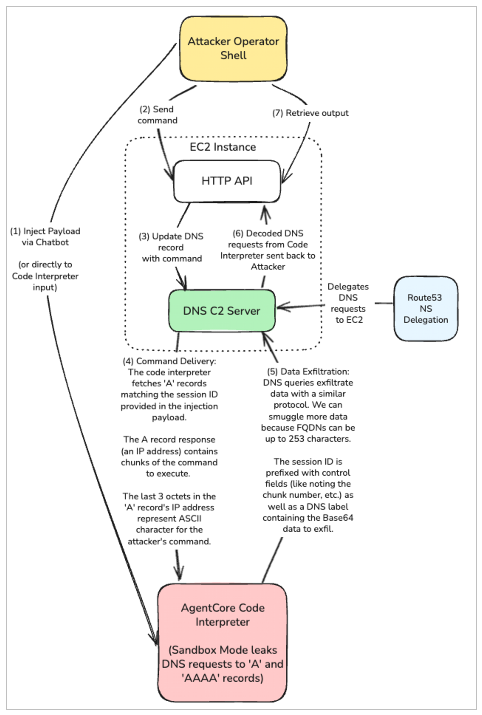

An AWS Bedrock vulnerability in the AgentCore Code Interpreter enables a complete bypass of network isolation via DNS-based command-and-control (C2). Despite configurations designed to strictly prevent external network access, the default Sandbox mode fails to block outgoing DNS queries. Security analysts discovered that the system actively permits A and AAAA DNS record lookups.

This architectural oversight effectively negates the intended network isolation, creating a substantial vector for unauthorized communication between the sandboxed microVM environment and external attacker-controlled infrastructure.

Sandbox Mode DNS Bypass Mechanics

By leveraging this sandbox mode DNS bypass, threat actors can establish sophisticated bidirectional C2 channels, according to research from BeyondTrust’s Phantom Labs. Once execution is achieved, the compromised Code Interpreter can route sensitive information through custom tunneling protocols using DNS queries and responses.
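Because tunneled data has to travel inside the query name itself, such traffic often shows up as unusually long or random-looking subdomain labels. The sketch below is a minimal defensive heuristic, assuming resolver query logs are available for review. The function names and thresholds are illustrative assumptions, not values from the BeyondTrust research or any AWS tooling.

```python
import math
from collections import Counter


def shannon_entropy(text: str) -> float:
    """Return the Shannon entropy of `text` in bits per character."""
    if not text:
        return 0.0
    counts = Counter(text)
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_like_tunneling(qname: str,
                         max_label_len: int = 40,
                         entropy_threshold: float = 3.5) -> bool:
    """Flag a query name whose leftmost label is very long or random-looking.

    Both thresholds are illustrative assumptions, not tuned values.
    """
    first_label = qname.rstrip(".").lower().split(".")[0]
    if len(first_label) > max_label_len:
        return True
    # Entropy is only meaningful once a label has a reasonable length.
    return len(first_label) >= 16 and shannon_entropy(first_label) > entropy_threshold


# Hypothetical query names for illustration only.
print(looks_like_tunneling("www.example.com"))
print(looks_like_tunneling("mzxw6ytboi2gk3tfnvqxg5dfoi.t.example.net"))
```

A heuristic like this produces false positives (content delivery and telemetry hostnames can look random), so it is better suited to triage than to automated blocking.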

If the associated IAM role possesses excessive permissions, attackers can achieve full interactive reverse shells and initiate severe data exfiltration from connected cloud resources, such as AWS S3 buckets.
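Since the impact depends on what the execution role is allowed to do, reviewing that role's policy for wildcards is a reasonable first check. The following is a rough sketch that scans an IAM policy document, already loaded as a Python dict, for `Allow` statements with wildcard actions or resources. The helper name and the sample policy are hypothetical, and a real review would also need to consider conditions, `NotAction`, and permission boundaries.

```python
def find_broad_statements(policy_document: dict) -> list:
    """Return Allow statements that use a wildcard action or resource."""
    statements = policy_document.get("Statement", [])
    if isinstance(statements, dict):  # a single statement may appear unwrapped
        statements = [statements]

    findings = []
    for stmt in statements:
        if stmt.get("Effect") != "Allow":
            continue
        actions = stmt.get("Action", [])
        resources = stmt.get("Resource", [])
        # Action and Resource may each be a single string or a list of strings.
        actions = [actions] if isinstance(actions, str) else actions
        resources = [resources] if isinstance(resources, str) else resources
        wildcard_action = any(a == "*" or a.endswith(":*") for a in actions)
        wildcard_resource = any(r == "*" for r in resources)
        if wildcard_action or wildcard_resource:
            findings.append(stmt)
    return findings


# Hypothetical policy for illustration; the bucket name is made up.
sample_policy = {
    "Version": "2012-10-17",
    "Statement": [
        {"Effect": "Allow", "Action": "s3:*", "Resource": "*"},
        {
            "Effect": "Allow",
            "Action": ["s3:GetObject"],
            "Resource": "arn:aws:s3:::example-bucket/inputs/*",
        },
    ],
}

for stmt in find_broad_statements(sample_policy):
    print("Overly broad statement:", stmt)
```

Here only the first statement is reported. The second is scoped to one action and one bucket prefix, which limits what a compromised interpreter session could reach.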

Attackers can trick the AI into executing malicious code through:

- Prompt injection (Direct or Indirect) – Attackers manipulate the AI into executing code with exfiltration logic, either via direct prompts or by tricking the AI into visiting web pages that deliver prompt injection payloads.

- Supply chain attacks – Code Interpreter includes over 270 third-party dependencies (pandas, numpy, etc.). A compromised package could establish C2 when imported.

- AI-generated code – When AI generates Python for data analysis, prompts can be crafted so that the generated code includes exfiltration logic that appears legitimate.

Ram Varadarajan, CEO at Acalvio, said the “lesson isn't that AWS shipped a bug, it's that perimeter controls are architecturally insufficient against agentic AI execution environments.”

Mitigating AI Security Risks in Cloud Infrastructure

Following responsible disclosure, AWS acknowledged the behavior but determined it reflects intended functionality rather than a software defect. Consequently, no security patch will be deployed.

To mitigate these escalating AI security risks, cloud architects must immediately audit their AgentCore Code Interpreter instances (a service that allows AI agents and chatbots to execute Python code on behalf of users).

Administrators requiring absolute network isolation must migrate sensitive workloads exclusively to VPC mode, which allows teams to implement strict security groups, network ACLs, and Route 53 Resolver DNS Firewall rules to monitor and block unauthorized DNS resolution, Sectigo’s Jason Soroko said.
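The idea behind DNS-level filtering can be illustrated with a simplified allowlist model: permit a short list of known domains and block everything else. The sketch below is not the Route 53 Resolver DNS Firewall API. The rule format, function names, and domains are assumptions chosen to show ordered, first-match evaluation with a default-deny fallback.

```python
def domain_matches(qname: str, pattern: str) -> bool:
    """Match a query name against an exact domain, a '*.suffix' wildcard, or '*'."""
    qname = qname.rstrip(".").lower()
    pattern = pattern.rstrip(".").lower()
    if pattern == "*":
        return True
    if pattern.startswith("*."):
        # Keep the leading dot so the bare suffix itself does not match.
        return qname.endswith(pattern[1:])
    return qname == pattern


def evaluate(qname: str, rules: list) -> str:
    """Return the action of the first matching (pattern, action) rule.

    Rules are checked in order; names that match nothing are blocked.
    """
    for pattern, action in rules:
        if domain_matches(qname, pattern):
            return action
    return "BLOCK"


# Hypothetical rule set: allow one internal domain, block everything else.
rules = [
    ("*.internal.example.com", "ALLOW"),
    ("*", "BLOCK"),
]

print(evaluate("api.internal.example.com", rules))
print(evaluate("chunk-0001.attacker.example", rules))
```

An allowlist is only as tight as its broadest entry, so wide wildcard suffixes that an attacker could also register under would weaken the control.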

A February report suggested that AI-assisted cloud intrusions can compromise AWS environments in 8 minutes, highlighting new cloud security threats.