Truepic vs. Deepfakes: A War Against Fake News

- Truepic, an image verifier, has raised over $8 million to help users tell fake posts from originals on the internet.

- Truepic is an independent project that is launching first on the r/iAMA subreddit, without any official partnership with Reddit.

- The utility of this product will later extend to dating apps, e-commerce websites, and other platforms.

One of the biggest problems on today’s moderately free internet is the authenticity of news items and the photos attached to them. The internet, and social media platforms in particular, is full of bogus stories backed by photoshopped evidence. Until now, it has been extremely hard to tell fake images from authentic ones. That is changing. Truepic, a startup focused on authenticating photos, has reportedly raised over $8 million for its product.

Truepic is primarily designed to verify photos on the internet. The app features a camera that captures photos and adds a watermark URL to them. It also saves the original copy of each photo so that viewers can compare against it and check whether the photo has been altered.
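The compare-against-the-original idea can be illustrated with a small Python sketch. This is not Truepic's actual method, which the announcement does not describe. It simply assumes a stored original and a circulating copy are compared by cryptographic hash, and the function names `fingerprint` and `matches_original` are hypothetical.

```python
import hashlib


def fingerprint(image_bytes: bytes) -> str:
    """Return the SHA-256 hex digest of the raw image bytes."""
    return hashlib.sha256(image_bytes).hexdigest()


def matches_original(original_bytes: bytes, candidate_bytes: bytes) -> bool:
    """True only if the candidate is byte-for-byte identical to the stored original."""
    return fingerprint(original_bytes) == fingerprint(candidate_bytes)


# Stand-in byte strings (assumption); real use would read image files from disk.
original = b"\x89PNG...original pixel data"
edited = b"\x89PNG...edited pixel data"

print(matches_original(original, original))  # True
print(matches_original(original, edited))    # False
```

A byte-level hash like this flags any change at all, including harmless re-compression, so a real verifier would likely need something more tolerant than this sketch.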

The startup has announced that its first major deployment will be on Reddit, where it will be used to verify users before they start their Ask Me Anything (AMA) question-and-answer sessions. In an AMA session, a user, usually a celebrity, announces on the platform that they will take questions on any topic during a set time slot.

There is no official partnership between Truepic and Reddit; this is an independent project. The utility of the product also goes far beyond Reddit and social media. Many platforms could use this photo verifier, including dating apps for their profiles, e-commerce listings, matrimonial websites, document-sharing apps, and more.

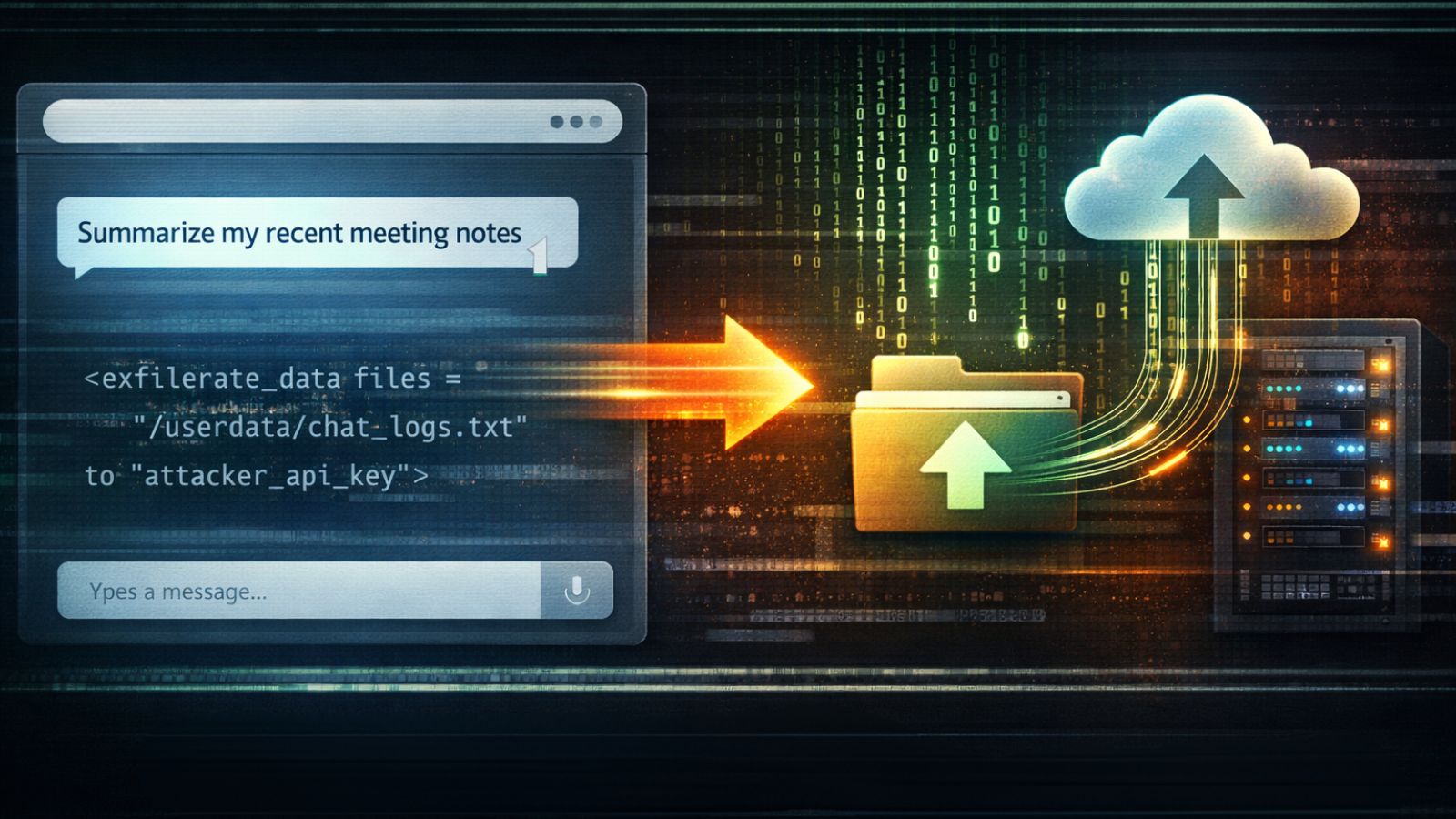

Currently, the biggest challenge for the app is detecting deepfakes created by artificial intelligence. These are photos, and also videos, in which an AI replaces a person’s face with someone else’s. The technology is known to be used in several areas, starting with pornography, in which a celebrity’s face is placed over an adult film star’s body. A similar technique is used by political and religious extremists, who swap faces to serve their own interests.

The market has long needed a product like this, and raising more than $8 million in funding is a sign of that demand. The founder and COO of Truepic said that ‘The world needs this technology to separate wrong from the right. It’s high time we take control of the digital imagery abuse.’

Users of r/iAMA recommend using the app to snap a self-portrait while holding a handwritten sign with their name and the date on it. Brian Lynch, a Reddit AMA moderator, says that Truepic is a perfect tool for the ever-evolving social constructs of the internet and for privacy laws.

Today, the internet has become a dumpster of recycled information. Until now, fraudulent posts have thrived on users’ inability to tell fake from original. Hopefully, Truepic will fix some of these issues.
