The Psychology Behind Phishing, Digital Coercion, and the Scam Economy

- Cyber hygiene is no longer just about securing systems; it is about building habits and safeguards that hold up at the moments when they matter most.

- The hardest parts of cybercrime to communicate to a general audience are not technical, but human.

- Attackers trigger familiar cues such as authority, urgency, fear, reward, and emotional connection, often within everyday work.

- Gender is one of many contextual signals used for persuasion and emotional leverage.

- Engaging with or complying with a scammer's demands often escalates risk rather than resolving the situation.

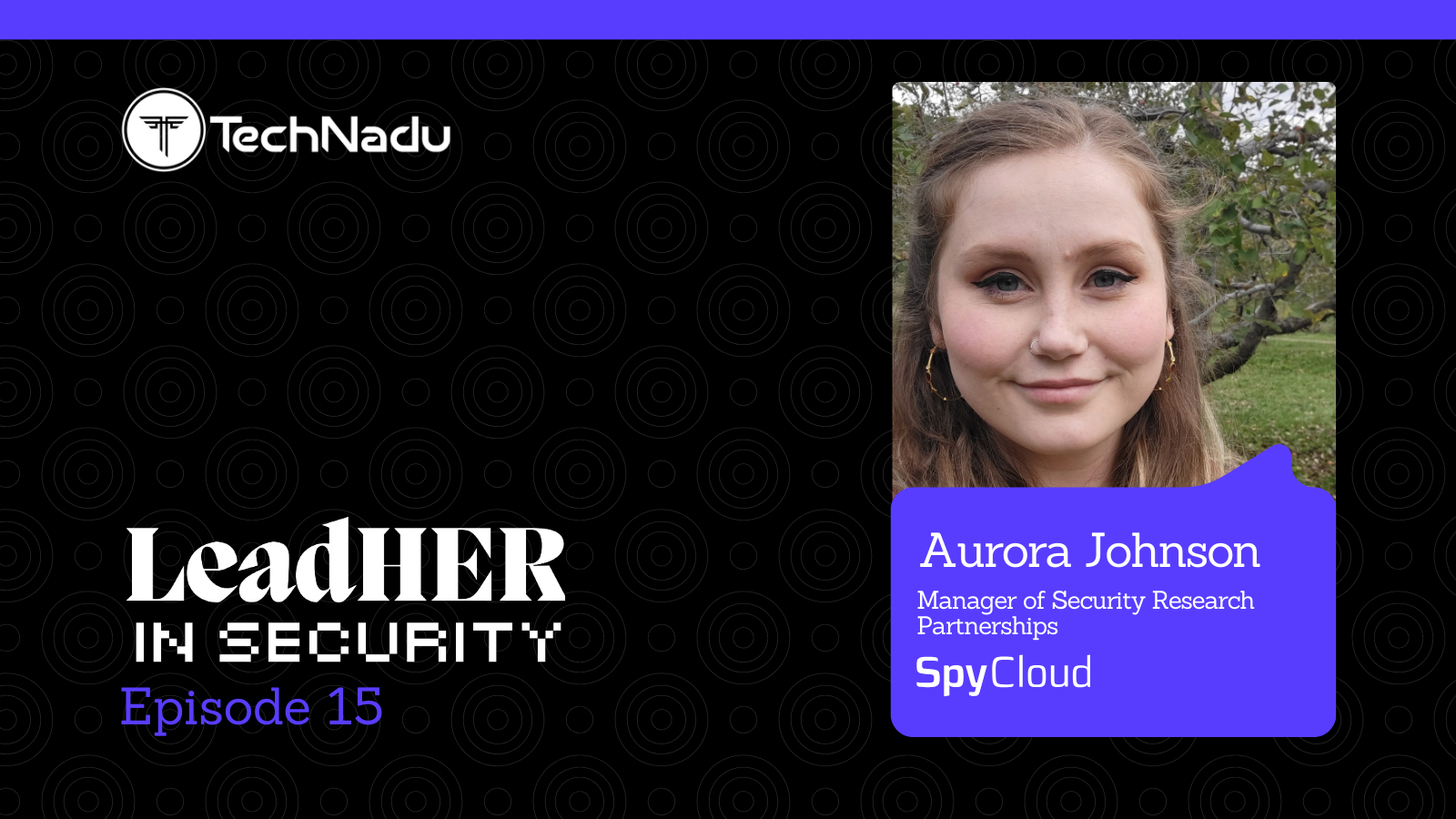

As part of our International Women’s Day editorial series, we speak with Bithika Nathan, Author and Founder of Dr. Phish Labs, a cybersecurity awareness ecosystem that uses storytelling, education, and behavioral insight to make digital safety second nature.

Nathan believes cybercrime succeeds at the intersection of technical gaps and human behavior, especially under urgency, pressure, or misplaced trust. She explains that attackers target behavior, responsiveness, and access, not individuals, operating at scale and personalizing only at the final moment.

Certain categories, such as romance scams, sextortion, deepfake abuse, cyberstalking, and doxing, disproportionately affect women through coercion and reputational harm.

She emphasizes that victims are not at fault, and that preserving evidence, slowing down under pressure, and seeking support are critical first steps.

Vishwa: What has most influenced how you think about cyber hygiene today?

Bithika: What has most influenced how I think about cyber hygiene today is observing how incidents actually unfold in the real world. Technical vulnerabilities still matter, but they are rarely the deciding factor on their own. In practice, cybercrime succeeds at the intersection of technical gaps and human behavior, when normal actions are taken under urgency, pressure, or misplaced trust.

That reality has reshaped cyber hygiene from a checklist of technical rules into a behavioral discipline: protecting identity, reducing risky moments, and designing practices that hold up when people are busy or distracted. Modern cyber hygiene is no longer just about securing systems; it is about building habits and safeguards that hold up at the moments when they matter most.

Vishwa: What aspects of cybercrime are hardest to communicate accurately to a general audience?

Bithika: The hardest aspects of cybercrime to communicate accurately to a general audience are not technical, but human. Many people still imagine cybercrime as “hacking”: a skilled attacker breaking into systems.

In reality, most modern attacks succeed through persuasion, timing, and psychological pressure. Criminals do not choose victims individually; they operate at scale, scanning for opportunity and personalizing only at the final moment.

This makes cybercrime feel random, leading to the common question, “Why would they target me?” The answer is that attackers target behavior, responsiveness, trust, urgency, and access, not people.

Another challenge is explaining how humans perceive risk. We are not wired to recognize threats that feel unlikely but occur repeatedly at scale. We respond to visible, immediate danger, not risks that are invisible and spread out over time. Attackers exploit this gap by triggering familiar cues such as authority, urgency, fear, reward, and emotional connection, often within everyday work or personal communication.

It is also widely misunderstood that AI represents a new category of cybercrime. In practice, AI mainly sharpens existing tactics, making them faster and more convincing.

The core problem remains social engineering combined with stolen access.

At its core, cybercrime is a business model built on scaling trust and identity, making it difficult to explain not because it is technically complex, but because it behaves in ways people do not intuitively expect.

Vishwa: Are there cybercrime categories where women are disproportionately affected?

Bithika: Yes and no. Research supports both. There is evidence that certain cybercrime categories disproportionately affect women, particularly those built around coercion, reputational harm, and sustained psychological pressure. These include romance scams, sextortion, image-based and deepfake abuse, cyberstalking, doxing, and targeted online harassment.

In these cases, attackers rely on trust-building, social exposure, and long-term manipulation rather than direct financial access. By contrast, financially motivated attacks such as phishing, business email compromise, and investment fraud show little gender differentiation and are driven primarily by role, access, and opportunity.

These patterns align with differences in attacker tactics and objectives.

Vishwa: Do you think attackers tailor social engineering differently based on gender? What factors do threat actors appear to consider when selecting or profiling targets for phishing?

Bithika: Yes. Attackers tailor social engineering tactics based on gender, but not in isolation. Gender is one of many contextual signals used to optimize persuasion, tone, and emotional leverage rather than a primary determinant of victim selection.

Modern threat actors rely on AI-driven profiling that combines role, behavior, psychological triggers, digital footprint, and security posture. The most effective campaigns exploit predictability and trust, not demographics alone, making social engineering a behavioral risk problem rather than a purely technical one.

Vishwa: What message would you share with individuals who may be affected by cybercrime or are presently being extorted by a scammer?

Bithika: The first and most important point is that the person affected is the victim; self-blame and isolation only serve the attacker’s intent. Cyber extortion and related crimes rely on fear, urgency, and silence, and responsibility lies entirely with the offender. When targeted, the priority is to avoid acting under pressure.

Preserving evidence, such as messages, emails, screenshots, and related records, is essential, as it supports investigation and an informed response. Engaging with or complying with demands often escalates risk rather than resolving the situation. Incidents should be reported promptly to law enforcement or appropriate cybercrime units, which are equipped to handle such cases discreetly.

In parallel, securing accounts and limiting exposure with the help of a cybersecurity professional, along with legal guidance where appropriate, can reduce further harm. Seeking support is a practical step toward regaining control, not a sign of failure.

Vishwa: When you read about cyber incidents, which details do you look for above others? In coverage of cyber incidents, what information do you wish were explained more clearly?

Bithika: When I read about a cyber incident, I don’t ignore the headline technical breach itself; it matters. But I slow down there. I want to understand what sat around it. The human choices. The system behaviors. The small moments that quietly shaped the outcome. The exploit is often the final move, not the opening one.

What interests me is how trust was established, how urgency crept in, how a decision felt reasonable at the time. I look at the workflow that encouraged speed over verification, the control that existed but was easy to step around, and the warning that blended into background noise.

These incidents rarely come from reckless behavior. They emerge from normal people operating inside systems that don’t always match how humans think, react, or prioritize under pressure.

When incident reporting captures those layers, the human context and the mechanism behind the breach, it stops being a post-mortem and becomes a lesson. Not just about what failed, but about what will fail again if nothing changes.

Vishwa: Which security practices do you believe have the greatest benefit in reducing cybercrime?

Bithika: When we talk about reducing cybercrime, it’s important not to oversimplify the problem. Some attacks are clearly technical in nature; unpatched systems, misconfigurations, or software vulnerabilities do play a role.

But in many real-world incidents, the deciding factor isn’t just the technology; it’s how people respond in the moment. From a human-layer perspective, cybercrime often succeeds when attackers tap into very normal human behaviors: responding quickly to urgency, trusting familiar names or authority, or trying to be helpful.

These aren’t flaws in people; they’re traits that make everyday work function smoothly. The challenge is that attackers are skilled at exploiting those traits under pressure. This is where practices like authentication apps and passkeys start to make sense beyond their technical value.

Authentication apps can introduce a pause and an opportunity for someone to notice that a request feels unexpected. Passkeys reduce the need for people to manage passwords altogether, reducing a common source of error and confusion. Neither approach is foolproof, but both help simplify decisions at moments when people are most likely to make mistakes.

At the same time, no authentication method fully protects against social engineering. As technical controls improve, attackers tend to adapt, shifting their focus toward persuasion: convincing someone to approve access, bypass a safeguard, or trust a request that “sounds right.”

That’s why awareness remains essential. People need a practical understanding of how manipulation works, what normal behavior looks like, and when it’s reasonable to slow down and verify. In practice, the most effective reduction in cybercrime comes from balance: solid technical controls paired with security awareness that respects human psychology.

When security is designed to work with how people think rather than expecting perfect behavior under pressure, it becomes far more resilient. That combination, applied consistently, is what makes cybercrime harder and less profitable over time.

Vishwa: From a prevention standpoint, where do individuals most often misjudge phishing risk today?

Bithika: From a prevention standpoint, phishing risk is often misjudged because public expectations have not kept pace with how these attacks have evolved. Many people still rely on outdated assumptions about what phishing “looks like,” even as modern attacks are designed to appear familiar, routine, and credible.

The absence of obvious warning signs is no longer reassuring, particularly as generative AI has made polished, personalized messages commonplace. Urgency plays a central role in this misjudgment. Time-sensitive requests trigger an instinct to act quickly, and hesitation can feel risky in the moment.

When messages arrive at points of distraction or pressure, individuals are more likely to respond without reflection. At the same time, realism itself has become a weak signal of trust. Familiar language, recognizable voices, or convincing visuals increasingly create a false sense of legitimacy rather than assurance.

There is also a tendency to overestimate the protection offered by technical controls. Messages that pass filters or appear in trusted platforms are often assumed to be safe, shifting attention away from context and intent. Ultimately, these misjudgments are not about carelessness but about how human psychology interacts with pressure and familiarity.

Effective prevention depends on reinforcing simple verification habits and encouraging brief pauses, especially when requests feel routine, urgent, or slightly out of place.