Security Instinct in Cyber: Driving Systems Design With Fully Homomorphic Encryption at Scale

- Agrawal states that security failures are usually architectural, not algorithmic.

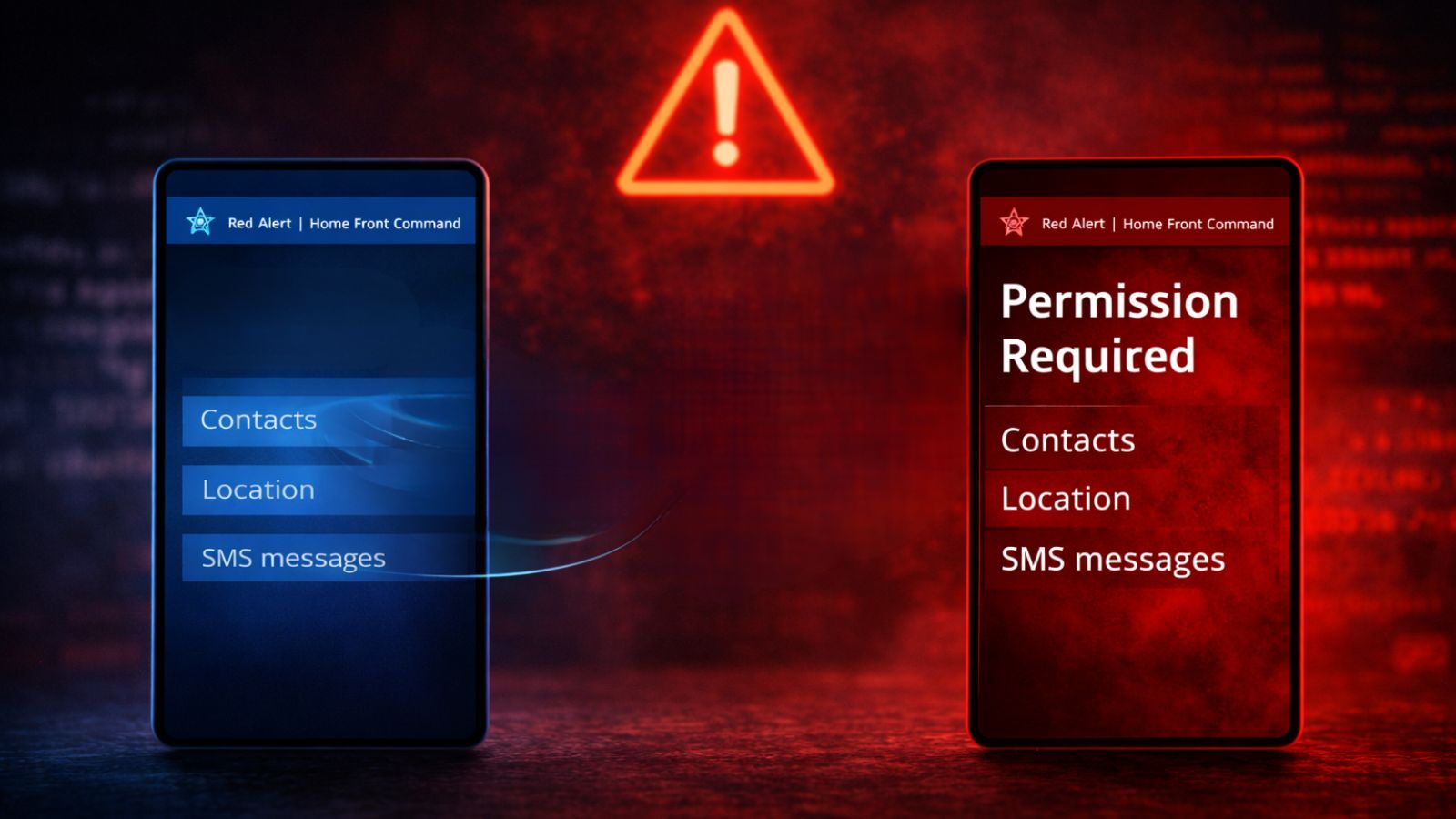

- Traditional encryption protects data when it is stored or transmitted, but it must be decrypted before any computation can take place.

- Fully Homomorphic Encryption allows computation directly on encrypted data, producing encrypted results that only the data owner can decrypt.

- CipherSonic focuses on making encrypted AI usable at scale, delivering near-plaintext performance.

- Women often leave cryptography because they cannot see how it connects to real-world problems.

Celebrating women in cybersecurity on International Women's Day, TechNadu highlights insights from Rashmi Agrawal, CTO & Co-Founder of CipherSonic Labs Inc., who discusses what it takes to make encrypted AI practical at the enterprise level.

Agrawal’s background spans leading fully homomorphic encryption research at Boston University, advancing encryption performance at Intel Labs, and now building secure cloud architectures for confidential LLM inference.

She focuses on closing the gap between cryptographic feasibility and production usability, and argues that security failures are often architectural rather than algorithmic. She clarifies that FHE becomes meaningful only when integrated into real AI pipelines and explains how interdisciplinary pathways into security can strengthen the field.

Today, we bring her perspectives on introducing students not only to applied security systems in general, but also to confidential computing, secure ML, and more.

Read further for Agrawal’s perspectives on mentorship, empowering women in the field of cybersecurity, and making way for the next generation of professionals with the right guidance.

Vishwa: As a woman co-founding a deep-tech security company, what challenges showed up in practice that you had not encountered in academic or research roles?

Rashmi: In research, credibility is mostly about technical output such as papers, prototypes, and results. In startups, credibility often has to be established before the technology is visible, especially in deep infrastructure domains like hardware security and acceleration.

I had to learn how to navigate investor and enterprise conversations where I was sometimes the only woman and the only hardware security architect in the room. I had to rapidly gain trust from my colleagues on system-level design decisions.

Another difference is that startups require constant tradeoffs between performance, security, and deployability, and the focus is not just on correctness or getting the best possible performance. That shift from proving something is best in class to making something usable at scale for our customers was a major transition.

Vishwa: From your experience, what skills or habits could help women build long-term careers in cybersecurity?

Rashmi: I would emphasize three core strengths:

- Systems thinking: Security failures are usually architectural, not algorithmic. The ability to reason about trust boundaries, attack surfaces, and integration points across an entire stack is far more valuable than optimizing a single primitive.

- Comfort with hardware–software co-design: This is where performance and security tradeoffs get resolved. Many of the most meaningful breakthroughs in secure computing happen at the intersection of layers, not within one discipline.

- Technical communication: Being able to explain system risks, threat models, and tradeoffs clearly across engineering, product, and executive teams is critical. Security decisions rarely happen in isolation; they require alignment.

From a woman’s perspective, I would also add the importance of developing visible technical conviction. In highly technical security environments, women are sometimes challenged more frequently or expected to over-prove their expertise.

Building the habit of articulating your reasoning clearly, standing by well-founded technical decisions, and engaging confidently in high-stakes design discussions makes a significant difference over time.

Cybersecurity careers reward engineers who can reason across layers, from silicon to software to deployment environments, and who are willing to consistently show up with both depth and clarity.

Vishwa: What would you change about how early-career women are introduced to security and cryptography roles today?

Rashmi: We tend to over-index on abstract math and theory, and under-index on impactful systems work early on. Many talented women leave the field of cryptography because they cannot see how it connects to real-world problems, or they don’t see people like themselves building those systems in industry.

I would introduce students sooner to applied security systems including confidential computing, secure ML, hardware roots of trust, post-quantum deployments, and pair that with mentorship from people shipping these technologies.

Representation matters. When early-career women see other women leading secure hardware teams, architecting encryption systems, or founding deep-tech companies, it expands what feels possible.

I would also normalize interdisciplinary entry points into security. Not everyone enters through pure mathematics; some come through hardware design, systems engineering, distributed systems, or AI infrastructure. Making those pathways more visible can retain a much broader and more diverse set of talents.

Seeing your work protect real users, and seeing leaders who look like you doing it, changes everything.

Vishwa: For readers who only know traditional encryption, what is the key difference with fully homomorphic encryption, where computation happens while data stays encrypted?

Rashmi: Traditional encryption protects data when it is stored or transmitted, but it must be decrypted before any computation can take place. This is because conventional encryption schemes do not support computation directly on encrypted data. As a result, when data is decrypted for processing, it becomes vulnerable to a wide range of attacks while in use.

Fully Homomorphic Encryption (FHE) changes that. It is based on a post-quantum secure family of schemes called lattice-based encryption. It allows computation directly on encrypted data, producing encrypted results that only the data owner can decrypt. The server never sees the raw data or the intermediate values.

From a systems perspective, FHE lets you redesign trust boundaries, so infrastructure no longer needs access to sensitive data. Cryptography and hardware can instead enforce that separation.
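To make "computation directly on encrypted data" concrete, here is a minimal sketch of a toy LWE-style (lattice-based) scheme in Python. It is not FHE and not the scheme CipherSonic uses: it supports only addition and multiplication by plaintext constants, and the parameters (`N`, `Q`, `T`, the noise range) and all function names are illustrative assumptions that are far too small to be secure. What it does show is the core idea from the answer above: the party running `add` and `scale` never holds the secret key, yet the owner decrypts the correct result.

```python
import random

# Toy parameters, far too small to be secure; chosen only for readability.
N = 16            # secret-key dimension
Q = 2**15         # ciphertext modulus
T = 16            # plaintext modulus (messages are integers mod T)
DELTA = Q // T    # scaling factor that lifts the message above the noise

def keygen(rng):
    """Sample a random binary secret key (held only by the data owner)."""
    return [rng.randrange(2) for _ in range(N)]

def encrypt(sk, m, rng):
    """Encrypt m as (a, b) with b = <a, sk> + e + DELTA*m (mod Q)."""
    a = [rng.randrange(Q) for _ in range(N)]
    e = rng.randint(-4, 4)  # small random noise hides the message
    b = (sum(x * s for x, s in zip(a, sk)) + e + DELTA * (m % T)) % Q
    return a, b

def decrypt(sk, ct):
    """Remove the mask <a, sk>, then round away the noise."""
    a, b = ct
    noisy = (b - sum(x * s for x, s in zip(a, sk))) % Q
    return ((noisy + DELTA // 2) // DELTA) % T

def add(c1, c2):
    """Server side: add two ciphertexts without any key."""
    (a1, b1), (a2, b2) = c1, c2
    return [(x + y) % Q for x, y in zip(a1, a2)], (b1 + b2) % Q

def scale(ct, k):
    """Server side: multiply a ciphertext by a plaintext constant k."""
    a, b = ct
    return [(k * x) % Q for x in a], (k * b) % Q

rng = random.Random(0)
sk = keygen(rng)

# Encrypted addition: 3 + 5, computed on ciphertexts only.
total = decrypt(sk, add(encrypt(sk, 3, rng), encrypt(sk, 5, rng)))

# Encrypted dot product with plaintext weights, the basic step of a linear layer.
weights = [2, 1, 3]
inputs = [1, 4, 2]
enc_inputs = [encrypt(sk, x, rng) for x in inputs]
acc = scale(enc_inputs[0], weights[0])
for w, ct in zip(weights[1:], enc_inputs[1:]):
    acc = add(acc, scale(ct, w))
dot = decrypt(sk, acc)

print(total, dot)  # 8 12
```

Each homomorphic operation also adds or scales the noise term; decryption stays correct only while the accumulated noise is below `DELTA / 2`. Full FHE schemes add ciphertext-by-ciphertext multiplication and noise-management techniques on top of this basic structure.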

Vishwa: In practical deployments, what decides whether Fully Homomorphic Encryption (FHE) is usable: latency, memory, key size, or something else?

Rashmi: Performance (latency) matters, but the real constraint is system-level feasibility:

- How well does the encrypted workload integrate into existing workflows and real AI pipelines?

- Can the memory and bandwidth requirements of the FHE deployment stay within realistic limits?

- What kind of key management can be done locally on the client side?

- And how do you move these large FHE-generated keys and ciphertexts around?

FHE stops being academic when you can batch efficiently, scale across hardware accelerators, and integrate with real deployment stacks, not just hit a single benchmark number.
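The memory and bandwidth questions above can be made tangible with some back-of-the-envelope arithmetic. The sketch below estimates the size of one ciphertext in an RLWE-based scheme and compares it with the plaintext it packs. The ring degree, modulus size, and the CKKS-style assumption of `ring_degree / 2` packed values are illustrative choices, not figures from the interview or from CipherSonic, and the function name is hypothetical.

```python
def rlwe_ciphertext_bytes(ring_degree: int, modulus_bits: int, polys: int = 2) -> int:
    """Size of one RLWE-style ciphertext: `polys` polynomials, each with
    `ring_degree` coefficients of `modulus_bits` bits."""
    return polys * ring_degree * modulus_bits // 8

# Illustrative (assumed) parameters, not taken from any specific deployment.
ring_degree = 2**15
modulus_bits = 800

ct_bytes = rlwe_ciphertext_bytes(ring_degree, modulus_bits)

# Assumed CKKS-style packing: ring_degree / 2 values per ciphertext,
# compared against storing the same values as 4-byte floats.
slots = ring_degree // 2
plain_bytes = slots * 4
expansion = ct_bytes / plain_bytes

print(f"ciphertext: {ct_bytes / 2**20:.2f} MiB, "
      f"plaintext: {plain_bytes / 2**10:.0f} KiB, "
      f"expansion: {expansion:.0f}x")
```

Under these assumptions a single fully packed ciphertext is roughly two orders of magnitude larger than the data it carries, and evaluation keys are typically larger still, which is why batching and data movement dominate the deployment questions listed above.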

Vishwa: When you talk about real-time inference on encrypted data, what is the realistic use case you think is close to production?

Rashmi: The closest production use cases are high-value inference workloads over regulated data, including fraud detection, financial risk scoring, healthcare diagnostics, and cross-organization analytics where data sharing is legally or competitively restricted.

These workloads do not require millisecond latency, but they require strong privacy guarantees and regulatory compliance, exactly where encrypted inference creates immediate value.

Vishwa: What changes when you move from academic FHE benchmarks to running encrypted inference inside an AI pipeline?

Rashmi: Everything becomes a systems problem. You are no longer optimizing a circuit; you are managing:

- Model architecture compatibility

- Memory layouts and data movement

- Accelerator scheduling

- Key management and trust domains

- Failure handling and observability

- Scalability

Cryptography is necessary, but not sufficient. Real deployment requires orchestration across hardware, software, and security infrastructure.

Vishwa: You’ve been involved in teaching and research environments. When people work on topics like FHE or post-quantum cryptography, what do they struggle with?

Rashmi: Most people struggle not with the math itself, but with connecting cryptographic theory to real systems. In FHE and post-quantum cryptography, algorithms are only the starting point; the real complexity shows up in hardware constraints, memory behavior, noise growth, side channels, and performance tradeoffs.

Students and researchers often underestimate how much engineering judgment is required to move from a correct construction to something that runs efficiently, securely, and predictably on real hardware. The hardest part is learning to think across layers, from cryptographic guarantees down to microarchitecture and deployment realities, instead of treating crypto as a purely abstract problem.

Vishwa: As CTO and Co-Founder at CipherSonic, what is the specific gap you are trying to solve that existing privacy-preserving ML or encryption tools do not?

Rashmi: Most privacy-preserving ML solutions either sacrifice performance or rely on trusted execution environments that introduce operational and trust complexity.

CipherSonic focuses on making encrypted AI usable at scale, delivering near-plaintext performance using custom off-the-shelf hardware-accelerated encrypted inference. Our goal is to make encrypted compute a default deployment option, not a research exception. That’s why we are closing the gap between cryptographic feasibility and production usability.

Vishwa: What part of encrypted inference are you focusing on most: speed, deployment simplicity, trust model, or hardware integration? Please explain why.

Rashmi: The core focus is deployment simplicity and trust model, enabled by hardware acceleration. Speed matters, but encrypted computing can only become pervasive when enterprises can deploy it without changing their security assumptions or operational workflows.

Our goal is to make encrypted inference feel like a natural extension of modern AI infrastructure that enterprises can adopt easily.