Meta AI Glasses Prompt UK ICO Investigation Over Privacy as Contractors Review Intimate User Videos

- Official Inquiry: The UK's Information Commissioner's Office (ICO) is formally contacting Meta regarding significant data privacy concerns linked to its AI-powered smart glasses.

- Intimate Data Review: The inquiry follows reports that human contractors reviewed sensitive, user-generated video content, including intimate moments.

- Lack of Transparency: Users may be unaware that their recorded content is subject to human review, a detail disclosed only in lengthy terms of service documents.

The UK's Information Commissioner's Office (ICO) has opened a formal inquiry into Meta's data handling practices over privacy concerns about the company's Meta AI smart glasses. The action follows a report alleging that sensitive video captured by users, including intimate and private moments, was viewed by third-party contractors in Kenya.

At the core of the issue is Meta's use of human reviewers to process data captured by its AI wearables. This human review was reportedly intended to improve how well the AI interprets what the glasses capture.

Data Handling and AI Wearable Technology

A recent investigation by Swedish newspapers Svenska Dagbladet (SvD) and Göteborgs-Posten (GP) suggested that a Meta subcontractor based in Kenya sometimes reviews extremely sensitive videos captured by Meta AI Glasses.

According to BBC News, the report prompted the ICO's inquiry, which will examine whether Meta is meeting its obligations under UK data protection law.

According to the BBC, Meta has said that data is filtered to protect privacy, for example by blurring faces, but sources quoted by SvD and GP say this filtering does not always work. The reports indicate that contractors viewed and analyzed extremely private footage, including recordings showing bank cards, users watching pornography, and sexual activity.

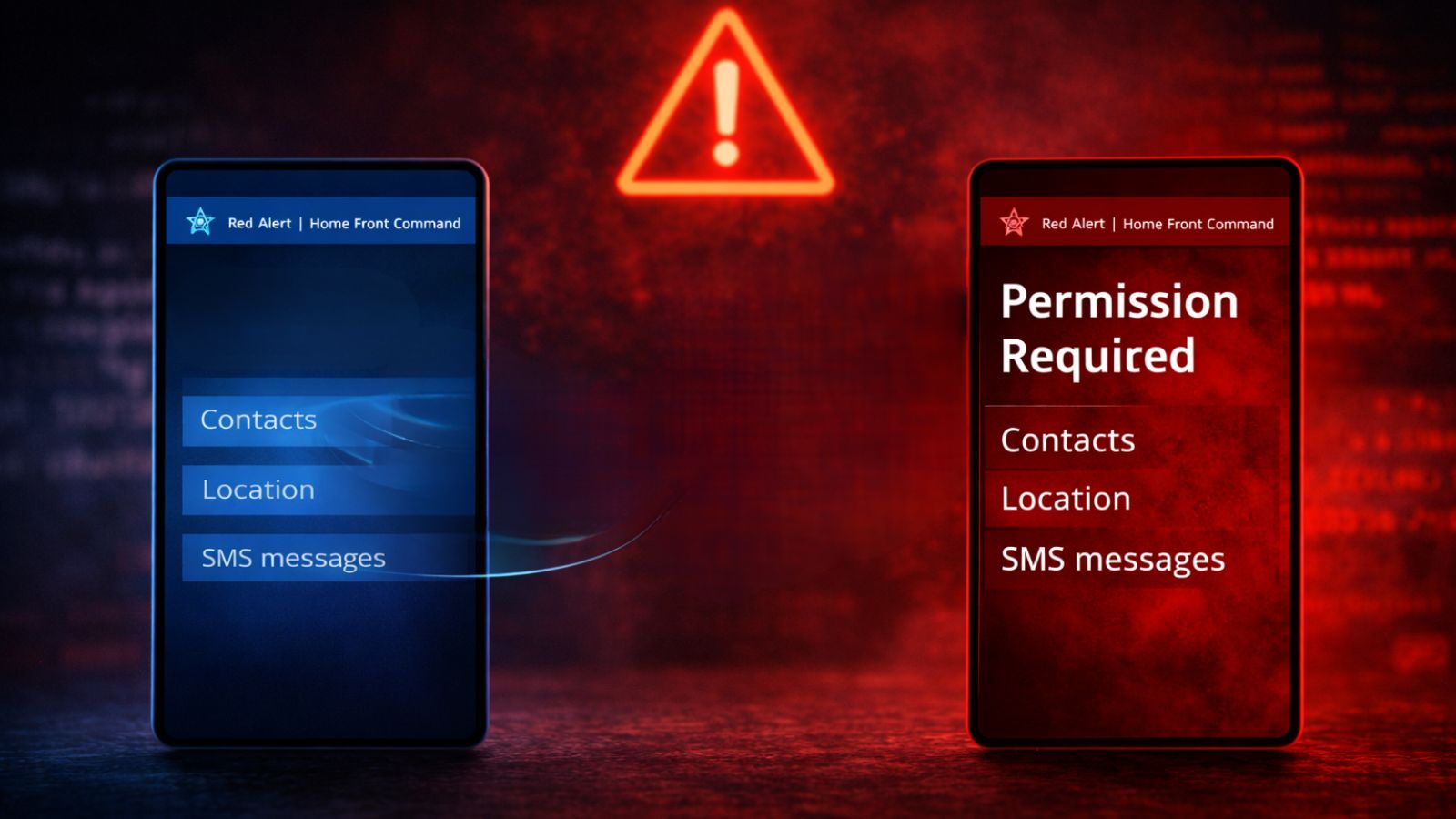

Broader Privacy Implications for AI Devices

As AI wearables become more integrated into daily life, service providers face growing pressure to explain clearly and transparently how personal data is collected, processed, and used.

While Meta's terms of service state that interactions may be subject to manual or automated review, users may not be fully aware of the extent of this human oversight. Users have also found ways to cover the glasses’ recording indicator light, which is meant to signal to people nearby that recording is under way.

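A 2024 report revealed that college students were able to pair Meta's smart glasses with facial recognition tools to identify strangers and look up their personal details in real time, a form of doxing.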