European Commission Presents a Sweeping Online Content Control Package

- The EU Commission is looking to place strict regulations on all online platforms, especially the larger ones.

- The management systems for piracy and harmful content will be audited and overseen by third parties.

- Fines for non-compliance could reach up to 6% of a company’s annual turnover/income.

It’s been a busy couple of days for the European Commission, as its representatives have put forward two legislative proposals: the Digital Services Act and the Digital Markets Act. The former proposes a set of strict moderation rules to help fight “illegal content online,” while the latter aims to curb the power of “digital gatekeepers.”

An example of gatekeeper behavior would be Google or Apple preventing users from uninstalling apps that come preinstalled on their devices. Both proposals are very broad in scope and reach, so we’ll focus on the first one here.

All online platforms that provide hosting services will be obliged to develop easy-to-use content take-down mechanisms that work swiftly. The availability of this notice-and-take-down system must also be clearly communicated to third parties such as copyright holders. Very large platforms (those with more than 45 million monthly active users in the EU) will be required to provide even more detailed reports to the authorities, explaining how they handle piracy and incoming take-down notices and providing statistics.

This will sound familiar to YouTube and Facebook, where pirates can stream entire sports matches before any action is taken against the illegal broadcast. Large platforms will also be expected to act proactively to prevent abuse, cooperate closely with “trusted content flaggers,” and have their risk-management measures overseen by independent auditors. Researchers and members of the European Board for Digital Services will also be given access to data from key platforms so they can scrutinize these processes.

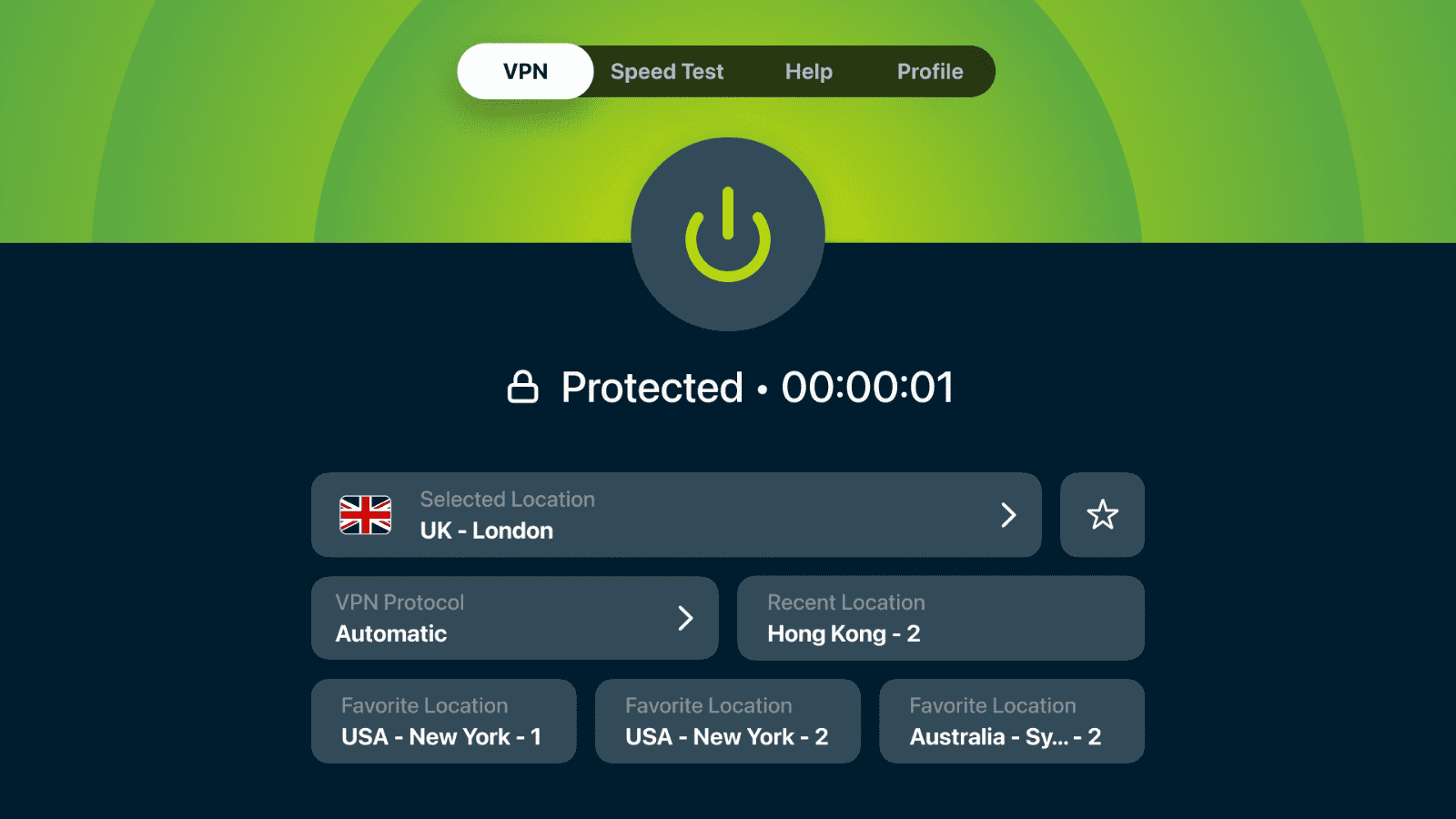

The Digital Services Act doesn’t stop at take-downs, though, as violators also have to be identified. To that end, strict new ‘know-your-customer’ rules will apply to make uploaders traceable. So, if a pirate uploads something to a platform, the service providers linked with that platform will have to provide the authorities with valid identification data for that user. Those that fail to do so will face fines.

On the enforcement side, the proposals set fines of up to 6% of the platform provider’s annual income or turnover. The size of each fine will depend on the severity, duration, and frequency of the violation. Providers that simply fail to supply data requested by the Commission, or that supply incomplete or misleading information, face fines of up to 1% of their annual turnover/income.

As for what constitutes “illegal content,” the legislation defines it as copyright-infringing content, hate speech, content that may cause distress or harm to others, terrorist content, and anything promoting counterfeit goods. The category of content that is harmful to others is a grey area, as it could encroach on freedom of artistic expression. In general, though, we’ll have to wait and see which elements become the main focus and which were included merely to round out the “politically correct” framing.